“We need much more honesty, about what data is being collected and about the inferences that they’re going to make about people. We need to be able to ask the university ‘What do you think you know about me?’”

- Sep 2016

-

www.theguardian.com

-

-

-

The importance of models may need to be underscored in this age of “big data” and “data mining”. Data, no matter how big, can only tell you what happened in the past. Unless you’re a historian, you actually care about the future — what will happen, what could happen, what would happen if you did this or that. Exploring these questions will always require models. Let’s get over “big data” — it’s time for “big modeling”.

-

Readers are thus encouraged to examine and critique the model. If they disagree, they can modify it into a competing model with their own preferred assumptions, and use it to argue for their position. Model-driven material can be used as grounds for an informed debate about assumptions and tradeoffs. Modeling leads naturally from the particular to the general. Instead of seeing an individual proposal as “right or wrong”, “bad or good”, people can see it as one point in a large space of possibilities. By exploring the model, they come to understand the landscape of that space, and are in a position to invent better ideas for all the proposals to come. Model-driven material can serve as a kind of enhanced imagination.

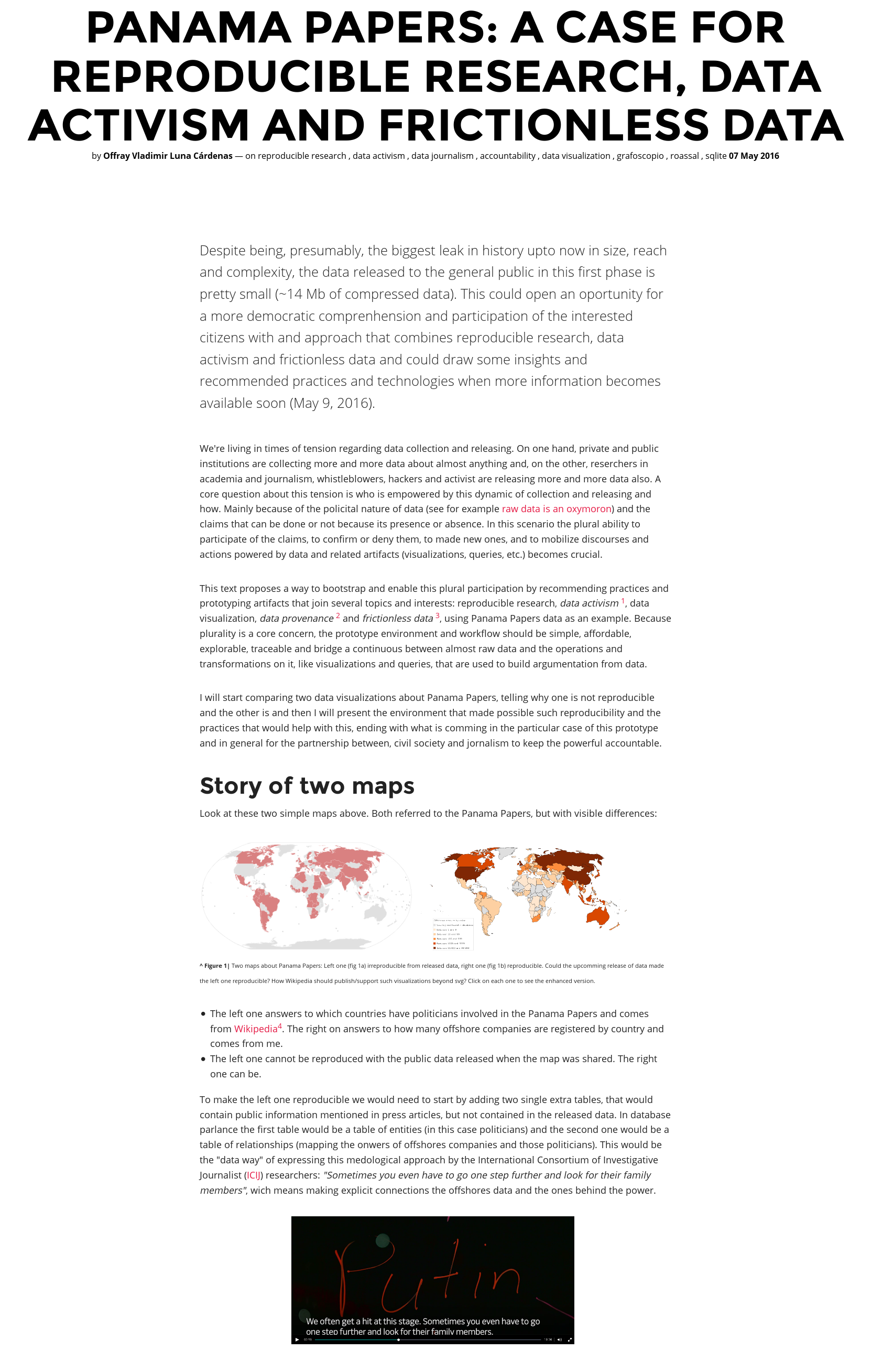

This is a point where my previous comments on data activism and data journalism (see 1, 2 & 3), and on more plural computing environments for engaging concerned citizens in the important issues of our time, could intersect with Victor's discourse.

-

The Gamma: Programming tools for data journalism

(b) languages for novices or end-users, [...] If we can provide our climate scientists and energy engineers with a civilized computing environment, I believe it will make a very significant difference.

But data journalists, and in fact data activists, social scientists, and so on, could be a "different type of novice", one that is more critically and politically involved (in the broader sense of the word "political").

The wider dialogue on important matters that is mediated, backed up and understood through data (such as climate change) requires more voices than the ones involved today. Because these voices need to reason and argue using data, we need to go beyond climate scientists or energy engineers as the only ones who need a "civilized computing environment" to participate in the important, complex and urgent matters of today's world. Previously, these more critical voices (activists, journalists, scientists) have helped to make policy makers accountable and more sensitive to other important and urgent issues.

In that sense, my work on reproducible research with the Panama Papers, as a prototype of a data continuum environment, or other projects like The Gamma, could serve as an exploration, invitation and early implementation of what is possible to enrich this data- and computing-enhanced dialogue.

-

I say this despite the fact that my own work has been in much the opposite direction as Julia. Julia inherits the textual interaction of classic Matlab, SciPy and other children of the teletype — source code and command lines.

The idea of a tradition of technologies that are "children of the teletype" is related to the comparison we make in the Data Week workshop/hackathon. In our case we talk about "Unix fathers" versus "Dynabook children" and the bifurcation/recombination points of these technologies:

-

If efficiency incentives and tools have been effective for utilities, manufacturers, and designers, what about for end users? One concern I’ve always had is that most people have no idea where their energy goes, so any attempt to conserve is like optimizing a program without a profiler.

-

The catalyst for such a scale-up will necessarily be political. But even with political will, it can’t happen without technology that’s capable of scaling, and economically viable at scale. As technologists, that’s where we come in.

Maybe we come in earlier, by enabling this conversation (as said previously). The political agenda is currently co-opted by economic interests far removed from a sustainable planet or the common good. Feedback loops can be a place to insert counter-hegemonic discourse, enabling a more plural and rational dialogue between civil society and government, beyond the short-term economic interests of incumbents.

-

This is aimed at people in the tech industry, and is more about what you can do with your career than at a hackathon. I’m not going to discuss policy and regulation, although they’re no less important than technological innovation. A good way to think about it, via Saul Griffith, is that it’s the role of technologists to create options for policy-makers.

Nice to see the conversation between technology and broader socio-political problems made so explicit in Bret's discourse.

What we're doing, in fact, is enabling this conversation between technologists and policy makers first. We're highlighting it via hackathons/workshops, but not reducing it to what happens there (an interesting critique of the techno-solutionist hackathon is here), and we're using the feedback loops in social networks with the intention of mobilizing a setup that goes beyond them. One example is our Twitter data selfies (picture/link below). The necessity of addressing urgent problems that involve complex techno-socio-political entanglements is felt more strongly in the Global South.

^ Up | Twitter data selfies: a strategy to increase the dialogue between technologists/hackers and policy makers (click here for details).

-

- Aug 2016

-

www.dati.gov.it

-

DATA GOVERNANCE

Data governance suggests a public administration (PA) that acts as a single thinking and decision-making body. The concept is easy to absorb, but it often does not reflect the real architecture of large PAs, such as the municipalities of provincial and regional capitals.

Data governance starts from a PA that has designed or implemented its IT platform for (1) managing internal workflows and (2) managing the services delivered to users, in such a way as to eliminate paper entirely and to allow only data entry, both internally by offices and by the users who request public services from public bodies. Data governance can be implemented properly and effectively only if the PA takes these elements into account. On this point I take the opportunity to mention the 7 ICT platforms that the 14 large Italian metropolitan cities must build under PON METRO. This is an opportunity for the 14 large Italian cities to adopt the same data governance, given that the 7 ICT platforms are required to be interoperable with one another. Data governance is created together with the design of the IT platforms that allow the PA to "function" in its territories. Data governance is inseparably tied to data entry. Data entry does not involve scanning paper or handling non-open working formats. In its day-to-day operating procedures, data governance is the foundation of a quality open data policy. In 2016-17 and beyond, a PA's data governance can no longer rest on a public employee manually building a CSV file and manually publishing it. Data governance should take into account that dataset publication procedures must be automatic and derive from the functions of the management applications (ICT platforms) already in use in the PA, with no human intervention except in the phase of filtering or masking data that concern individuals' privacy.
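The automatic publication step argued for above can be illustrated with a minimal sketch. It assumes that a management application can hand over its records as dictionaries and that the privacy-sensitive fields are known in advance. The field names (`name`, `tax_code`, `permit_id`, `district`, `issued`), the sample records, and the function `export_open_data` are all hypothetical, invented for illustration; they do not describe any real PA platform.

```python
import csv
import io

# Hypothetical privacy-sensitive fields; a real application would define its own.
PRIVATE_FIELDS = {"name", "tax_code"}


def export_open_data(records, private_fields=PRIVATE_FIELDS):
    """Serialize records to CSV text, leaving out privacy-sensitive fields."""
    if not records:
        return ""
    # Keep the column order of the first record, minus the private fields.
    public_fields = [f for f in records[0] if f not in private_fields]
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=public_fields,
        extrasaction="ignore",  # silently skip keys that are not published
        lineterminator="\n",
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Invented sample records standing in for the output of a management application.
records = [
    {"permit_id": 1, "name": "Mario Rossi", "tax_code": "AAA111",
     "district": "Centro", "issued": "2016-08-01"},
    {"permit_id": 2, "name": "Anna Bianchi", "tax_code": "BBB222",
     "district": "Porto", "issued": "2016-08-03"},
]

csv_text = export_open_data(records)
print(csv_text)
```

In this sketch the only human decision is the content of `PRIVATE_FIELDS`, which mirrors the point that human intervention should be limited to filtering or masking personal data; everything else runs without manual CSV construction.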

-

-

www.cdc.gov

-

Credibull score = 9.60 / 10

To provide feedback on the score fill in the form available here

What is Credibull? getcredibull.com


-

-

books.google.ca

-

Page 122

Borgman on terms used by the humanities and social sciences to describe data and other types of analysis

Humanists and social scientists frequently distinguish between primary and secondary information based on the degree of analysis. Yet this ordering sometimes conflates data, sources, and resources, as exemplified by a report that distinguishes "primary resources, e.g., books" from "secondary resources, e.g., catalogs." Resources also categorized as primary were sensor data, numerical data, and field notebooks, all of which would be considered data in the sciences. Rarely would books, conference proceedings, and theses that the report categorizes as primary resources be considered data, except when used for text- or data-mining purposes. Catalogs, subject indices, citation indexes, search engines, and web portals were classified as secondary resources. These are typically viewed as tertiary resources in the library community because they describe primary and secondary resources. The distinctions between data, sources, and resources vary by discipline and circumstance. For the purposes of this book, primary resources are data, secondary resources are reports of research, whether publications or interim forms, and tertiary resources are catalogs, indexes, and directories that provide access to primary and secondary resources. Sources are the origins of these resources.

-

Page XVIII

Borgman notes that no social framework exists for data that is comparable to the framework that exists for analysis. Cf. Kitchin 2014, who argues that pre-big data, we privileged analysis over data to the point that we threw away the data afterwards. This is what creates the holes in our archives.

These wondrous capabilities [of data management] must be compared to the remarkably stable scholarly communication system in which they exist. The reward system continues to be based on publishing journal articles, books, and conference papers. Peer review legitimizes scholarly work. Competition and cooperation are carefully balanced. The means by which scholarly publishing occurs is in an unstable state, but the basic functions remain relatively unchanged. While capturing and managing the "data deluge" is a major driver of scholarly infrastructure developments, no social framework for data exists that is comparable to that for publishing.

-

- Jul 2016

-

books.google.ca

-

Page 220

Humanistic research takes place in a rich milieu that incorporates the cultural context of artifacts. Electronic text and models change the nature of scholarship in subtle and important ways, which have been discussed at great length since the humanities first began to contemplate the scholarly application of computing.

-

Page 217

Methods for organizing information in the humanities follow from their research practices. Humanists do not rely on subject indexing to locate material to the extent that the social sciences or sciences do. They are more likely to be searching for new interpretations that are not easily described in advance; the journey through texts, libraries, and archives often is the research.

-

Page 223

Borgman is discussing here the difference between the way humanists handle data and the way scientists and social scientists do:

When generating their own data such as interviews or observations, humanists' efforts to describe and represent data are comparable to those of scholars in other disciplines. Often humanists are working with materials already described by the originator or holder of the records, such as libraries, archives, government agencies, or other entities. Whether or not the desired content already is described as data, scholars need to explain its evidentiary value in their own words. That report often becomes part of the final product. While scholarly publications in all fields set data within a context, the context and interpretation are scholarship in the humanities.

-

Pages 220-221

Digital humanities projects result in two general types of products. Digital libraries arise from scholarly collaborations and the initiatives of cultural heritage institutions to digitize their sources. These collections are popular for research and education. … The other general category of digital humanities products consists of assemblages of digitized cultural objects with associated analyses and interpretations. These are the equivalent of digital books in that they present an integrated research story, but they are much more, as they often include interactive components and direct links to the original sources on which the scholarship is based. … Projects that integrate digital records for widely scattered objects are a mix of a digital library and an assemblage.

-

Page 219

In the humanities, it is difficult to separate artifacts from practices or publications from data.

-

Page 219

Humanities scholars integrate and aggregate data from many sources. They need tools and services to analyze digital data, as do others in the sciences and social sciences, but also tools that assist them in interpretation and contemplation.

-

Page 215

What seems a clear line between publications and data in the sciences and social sciences is a decidedly fuzzy one in the humanities. Publications and other documents are central sources of data to humanists. … Data sources for the humanities are innumerable. Almost any document, physical artifact, or record of human activity can be used to study culture. Humanities scholars value new approaches, and recognizing something as a source of data (e.g., high school yearbooks, cookbooks, or wear patterns in the floor of public places) can be an act of scholarship. Discovering heretofore unknown treasures buried in the world's archives is particularly newsworthy. … It is impossible to inventory, much less digitize, all the data that might be useful to scholarship communities. Also distinctive about humanities data is their dispersion and separation from context. Cultural artifacts are bought and sold, looted in wars, and relocated to museums and private collections. International agreements on the repatriation of cultural objects now prevent many items from being exported, but items that were exported decades or centuries ago are unlikely to return to their original site. … Digitizing cultural records and artifacts makes them more malleable and mutable, which creates interesting possibilities for analyzing, contextualizing, and recombining objects. Yet digitizing objects separates them from their origins, exacerbating humanists’ problems in maintaining the context. Removing text from its physical embodiment in a fixed object may delete features that are important to researchers, such as line and page breaks, fonts, illustrations, choices of paper, bindings, and marginalia. Scholars frequently would like to compare such features in multiple editions or copies.

-

Page 214

Borgman on information artifacts and communities:

Artifacts in the humanities differ from those of the sciences and social sciences in several respects. Humanists use the largest array of information sources, and as a consequence, the distinction between documents and data is the least clear. They also have a greater number of audiences for the data and the products of the research. Whereas scientific findings usually must be translated for a general audience, humanities findings often are directly accessible and of immediate interest to the general public.

-

Page 204

Borgman on the different types of data in the social sciences:

Data in the social sciences fall into two general categories. The first is data collected by researchers through experiments, interviews, surveys, observations, or similar means, analogous to scientific methods. … The second category is data collected by other people or institutions, usually for purposes other than research.

-

Page 202

Borgman on information artifacts in the social sciences

Like the sciences, the social sciences create and use minimal information. Yet they differ in the sources of the data. While almost all scientific data are created by and for scientific purposes, a significant portion of social scientific data consists of records created for other purposes, by other parties.

-

Borgman, Christine L. 2007. Scholarship in the Digital Age: Information, Infrastructure, and the Internet. Cambridge, Mass: MIT Press.

-

Page 147

Borgman on the challenges facing the humanities in the age of Big Data:

Text and data mining offer similar grand challenges in the humanities and social sciences. Gregory Crane provides some answers to the question "What do you do with a million books?" Two obvious answers include the extraction of information about people, places, and events, and machine translation between languages. As digital libraries of books grow through scanning efforts such as Google Print, the Open Content Alliance, the Million Books Project, and comparable projects in Europe and China, and as more books are published in digital form, technical advances in data description, analysis, and verification are essential. These large collections differ from earlier, smaller efforts on several dimensions. They are much larger in scale, the content is more heterogeneous in topic and language, the granularity increases when individual words can be tagged, they are noisier than their well-curated predecessors, and their audiences are more diverse, reaching the general public in addition to the scholarly community. Computer scientists are working jointly with humanists, language, and other domain specialists to parse texts, extract named entities and places, augment optical character recognition techniques, and advance the state of the art of information retrieval.

-

Page 137

Borgman discusses here the case of NASA, which lost the original video recording of the first moon landing in 1969. Backups exist, apparently, but they are of lower quality than the originals.

-

Page 122

Here Borgman suggests that there is some confusion or lack of overlap between the words that humanists and social scientists use in distinguishing types of information and the language used to describe data.

Humanists and social scientists frequently distinguish between primary and secondary information based on the degree of analysis. Yet this ordering sometimes conflates data, sources, and resources, as exemplified by a report that distinguishes "primary resources, e.g., books" from "secondary resources, e.g., catalogs." Resources also categorized as primary were sensor data, numerical data, and field notebooks, all of which would be considered data in the sciences. But rarely would books, conference proceedings, and theses that the report categorizes as primary resources be considered data, except when used for text- or data-mining purposes. Catalogs, subject indices, citation indexes, search engines, and web portals were classified as secondary resources.

-

Pages 119 and 120

Here Borgman discusses the various definitions of data showing them working across the fields

The following definition of data is widely accepted in this context: a reinterpretable representation of information in a formalized manner suitable for communication, interpretation, or processing. Examples of data include a sequence of bits, a table of numbers, the characters on a page, the recording of sounds made by a person speaking, or a moon rock specimen. Definitions of data often arise from individual disciplines, but can apply to data used in science, technology, the social sciences, and the humanities: data are facts, numbers, letters, and symbols that describe an object, idea, condition, situation, or other factors.... The terms data and facts are treated interchangeably, as is the case in legal contexts. Sources of data include observations, computations, experiments, and record-keeping. Observational data include weather measurements... and attitude surveys... or involve multiple places and times. Computational data result from executing a computer model or simulation.... Experimental data include results from laboratory studies such as measurements of chemical reactions or from field experiments such as controlled behavioral studies.... Records of government, business, and public and private life also yield useful data for scientific, social scientific, and humanistic research.

-

Pages 117 to 119

Here Borgman discusses the ability to go back and forth between data and reports on data; she cites Phil Bourne 2005 on this for biomedicine. She also discusses how, in the pre-digital era, data was understood as a support mechanism for final publication and as a result was allowed to deteriorate or be destroyed after the publications based on it appeared.

-

Page 115

Borgman makes the point here that while there is a commons in the infrastructure of scholarly publishing, there is less of a commons in the infrastructure for data across disciplines.

The infrastructure of scholarly publishing bridges disciplines: every field produces journal articles, conference papers, and books, albeit in differing ratios. Libraries select, collect, organize, and make accessible publications of all types, from all fields. No comparable infrastructure exists for data. A few fields have major mechanisms for publishing data in repositories. Some fields are in the stage of developing standards and practices to aggregate their data resources and make them more widely accessible. In most fields, especially outside the sciences, data practices remain local, idiosyncratic, and oriented to current usage rather than preservation, curation, and access. Most data collections, where they exist, are managed by individual agencies within disciplines, rather than by libraries or archives. Data managers usually are trained within the disciplines they serve. Only a few degree programs in information studies include courses on data management. The lack of infrastructure for data amplifies the discontinuities in scholarly publishing. Despite common concerns, independent debates continue about access to publications and data.

-

Page 41

Discussions of digital scholarship tend to distinguish implicitly or explicitly between data and documents. Some view data and documents as a continuum rather than a dichotomy. In this sense, data such as numbers, images, and observations are the initial products of research, and publications are the final products that set research findings in context.

-

A great paragraph here on the value of interconnection

Scholarly data and documents are of most value when they are interconnected rather than independent. The outcomes of a research project could be understood most fully if it were possible to trace an important finding from a grant proposal, to data collection, to a data set, to its publication, to its subsequent review and comment. Journal articles are more valuable if one can jump directly from the article to those it cites and to later articles that cite the source article. Articles are even more valuable if they provide links to data on which they are based. Some of these capabilities already are available, but their expansion depends more on the consistency of the data description, access arrangements, and intellectual property agreements than on technological advances.

I think here of the line from Jim Gray: "May all your problems be technical."

-

p. 8 (actually this links to p. 7, since p. 8 is excluded)

Another trend is the blurring of the distinction between primary sources, generally viewed as unprocessed or unanalysed data, and secondary sources that set data in context.

Good point about how this is a new thing. On the next page she discusses how we are now collapsing the traditional distinction between primary and secondary sources.

-

Retrieval methods designed for small databases decline rapidly in effectiveness as collections grow...

This is an interesting point that is missed in the distant reading controversies: it's all very well to say that you prefer close reading, but close reading doesn't scale, or rather the methodologies used to decide what to close read were developed when big data didn't exist. How do you combine the two when you can read everything? I.e., you close read Dickens because he's what survived the 19th century as being worth reading. But now, if we could recover everything from the 19th century, how do you justify methodologically not looking more widely?

Tags

- Moretti

- digital libraries

- Disciplinary differences

- Definitions

- social sciences

- borgman 2007

- Borgman 2007

- Scholarly Commons

- Scholarly Communication

- disciplinary difference

- distant reading

- Borgman

- NASA

- Documents

- method

- Scholarly

- electronic texts

- Ramsay

- humanities data

- Fish

- citation

- primary sources

- digital humanities

- digital scholarship

- Culler

- citation practices

- methodology

- algorithmic criticism

- data

- humanities

- Humanities

- Data preservation

- Interconnection

- Data

- editions

- secondary sources

- Classification


-

-

books.google.ca

-

Page 14

Rockwell and Sinclair note that corporations are mining text including our email; as they say here:

More and more of our private textual correspondence is available for large-scale analysis and interpretation. We need to learn more about these methods to be able to think through the ethical, social, and political consequences. The humanities have traditions of engaging with issues of literacy, and big data should not be an exception. How to analyze, interpret, and exploit big data are big problems for the humanities.

-

Page 14

Rockwell and Sinclair note that HTML and PDF documents account for 17.8% and 9.2% of (I think) all data on the web while images and movies account for 23.2% and 4.3%.

-

-

heretothere.trubox.ca

-

“knowledge creation”.

The "business" of the university?

-

-

journals-openedition-org.accesdistant.sorbonne-universite.fr

-

big data

Algorithms need supposedly neutral data... This rather goes along with the promotional discourse that accompanies these algorithms and recommendation services, which treats their data as "natural", with no intrinsic value. (See Bonenfant 2015.)

-

-

books.google.ca

-

Initially, the digital humanities consisted of the curation and analysis of data that were born digital, and the digitisation and archiving projects that sought to render analogue texts and material objects into digital forms that could be organised and searched and be subjects to basic forms of overarching, automated or guided analysis, such as summary visualisations of content or connections between documents, people or places. Subsequently, its advocates have argued that the field has evolved to provide more sophisticated tools for handling, searching, linking, sharing and analysing data that seek to complement and augment existing humanities methods, and facilitate traditional forms of interpretation and theory building, rather than replacing traditional methods or providing an empiricist or positivistic approach to humanities scholarship.

A summary of the history of the digital humanities.

-

Data are not useful in and of themselves. They only have utility if meaning and value can be extracted from them. In other words, it is what is done with data that is important, not simply that they are generated. The whole of science is based on realising meaning and value from data. Making sense of scaled small data and big data poses new challenges. In the case of scaled small data, the challenge is linking together varied datasets to gain new insights and opening up the data to new analytical approaches being used in big data. With respect to big data, the challenge is coping with its abundance and exhaustivity (including sizeable amounts of data with low utility and value), timeliness and dynamism, messiness and uncertainty, high relationality, semi-structured or unstructured nature, and the fact that much of big data is generated with no specific question in mind or is a by-product of another activity. Indeed, until recently, data analysis techniques have primarily been designed to extract insights from scarce, static, clean and poorly relational datasets, scientifically sampled and adhering to strict assumptions (such as independence, stationarity, and normality), and generated and analysed with a specific question in mind.

Good discussion of the different approaches allowed/required by small v. big data.

-

25% of data was stored in digital form in 2000 (the rest analogue); 94% by 2007.

-

Kitchin, Rob. 2014. The Data Revolution. Thousand Oaks, CA: SAGE Publications Ltd.

-

-

books.google.ca

-

Kitchin, Rob. 2014. The Data Revolution. Thousand Oaks, CA: SAGE Publications Ltd.

-

-

www.clir.org www.clir.orgpub1711

-

digital data

are there non-digital data?


-

-

lawriephipps.co.uk

-

The visualisation may look like data, but it is a snapshot of how I am connected, it is my rhizomatic digital landscape. For me it reinforces the fact that digital is people.

Really nice way to end the article.

I love Data = People :)

-

Everyone should acquire the skills to understand data, and analytics.

AMEN!


-

-

hybridpedagogy.org

-

what do we do with that information?

Interestingly enough, a lot of teachers either don’t know that such data might be available or perceive very little value in monitoring learners in such a way. But a lot of this can be negotiated with learners themselves.

-

turn students and faculty into data points

Data=New Oil

-

E-texts could record how much time is spent in textbook study. All such data could be accessed by the LMS or various other applications for use in analytics for faculty and students.”

-

not as a way to monitor and regulate

-

-

ideas.repec.org

-

Replication data for this study can be found in Harvard's Dataverse


-

-

hackeducation.com

-

demanded by education policies — for more data

-

-

medium.com

-

data being collected about individuals for purposes unknown to these individuals

-

-

www.businessinsider.com

-

Data collection on students should be considered a joint venture, with all parties — students, parents, instructors, administrators — on the same page about how the information is being used.

-

-

www.educationdive.com

-

there is some disparity and implicit bias

-

-

motherboard.vice.com

-

The arrival of quantified self means that it's no longer just what you type that is being weighed and measured, but how you slept last night, and with whom.

-

-

blog.clever.com

-

Limit retention to what is useful.

So what data does h retain?

- username

- email address

Do annotations count as data?

-

- Jun 2016

-

idlewords.com

-

Even if you trust everyone spying on you right now, the data they're collecting will eventually be stolen or bought by people who scare you. We have no ability to secure large data collections over time.

Fair enough.

And "Burn!!" on Microsoft with that link.


-

-

digitalhumanities.org

-

Data in Digital Scholarship 23

Data in digital scholarship

-

-

blog.jonudell.net

-

Annotation can help us weave that web of linked data.

This pithy statement brings together all sorts of previous annotations. Would be neat to map them.

-

-

www.forbes.com

-

dynamic documents

A group of experts got together last year at Dagstuhl and wrote a white paper about this.

Basically the idea is that the data, the code, the protocol/analysis/method, and the narrative should all exist as equal objects on the appropriate platform: code in a code repository like GitHub; data in a data repository that understands data formats, like Mendeley Data (my company) and Figshare; protocols somewhere like protocols.io; and the narrative, which ties it all together, still at the publisher. Discussion and review can take the form of comments or, even better, annotations just like I'm doing now.

-

-

Local file Local file

-

In a sample of 2,101 scientific papers published between 1665 and 1800, Beaver and Rosen found that 2.2% described collaborative work. Notable was the degree of joint authorship in astronomy, especially in situations where scientists were dependent upon observational data.

Astronomy was an area of collaboration because astronomers needed to share data.

-

-

exposingtheinvisible.org exposingtheinvisible.org

-

What type of team do you need to create these visualisations? OpenDataCity has a special team of really high-level nerds. Experts on hardware, servers, software development, web design, user experience and so on. I contribute the more mathematical view on the data. But usually a project is done by just one person, who is chief and developer, and the others help him or her. So, it's not like a group project. Usually, it's a single person and a lot of help. That makes it definitely faster, than having a big team and a lot of meetings.

This strengthens the idea that data visualization is a field where a personal approach is still viable, as is also shown by the many individuals who are highly valued as data visualizers.

-

-

wiki.de.dariah.eu wiki.de.dariah.eu

-

List of publications on open access research data

nice bibliography!

-

- May 2016

-

en.wikipedia.org en.wikipedia.org

-

After graduating from MIT at the age of 29, Loveman began teaching at Harvard Business School, where he was a professor for nine years.[8][10] While at Harvard, Loveman taught Service Management and developed an interest in the service industry and customer service.[8][10] He also launched a side career as a speaker and consultant after a 1994 paper he co-authored, titled "Putting the Service-Profit Chain to Work", attracted the attention of companies including Disney, McDonald's and American Airlines. The paper focused on the relationship between company profits and customer loyalty, and the importance of rewarding employees who interact with customers.[7][8] In 1997, Loveman sent a letter to Phil Satre, the then-chief executive officer of Harrah's Entertainment, in which he offered advice for growing the company.[7] Loveman, who had done some consulting work for the company in 1991,[11] again began to consult for Harrah's and, in 1998, was offered the position of chief operating officer.[8] He initially took a two year sabbatical from Harvard to take on the role of COO of Harrah's,[10] at the end of which Loveman decided to remain with the company.[12]

Putting the Service-Profit Chain to Work

-

-

blog.deming.org blog.deming.org

-

the most important figures that one needs for management are unknown or unknowable (Lloyd S. Nelson, director of statistical methods for the Nashua corporation), but successful management must nevertheless take account of them.

Distinguish clearly between the data that can be known and the data that cannot.


-

-

www.force11.org www.force11.org

-

From Bits to Narratives: The Rapid Evolution of Data Visualization Engines

It was an amazing presentation by Mr. Cesar A. Hidalgo, and an eye-opener for me in the area of data visualisation. As a national-level organisation, we have huge amounts of data, but we never thought about data visualisation. Your projects, particularly Pantheon and Immersion, are marvelous, and I came to know that you are using D3. It is a great job.

-

-

www.swissinfo.ch www.swissinfo.ch

-

Around 40% of Swiss research is open access

-

-

www.insidehighered.com www.insidehighered.com

-

The entirely quantitative methods and variables employed by Academic Analytics -- a corporation intruding upon academic freedom, peer evaluation and shared governance -- hardly capture the range and quality of scholarly inquiry, while utterly ignoring the teaching, service and civic engagement that faculty perform,

-

-

datascience.codata.org datascience.codata.org

-

What is missing and trends.

Cough GigaScience Cough. See integrated GigaDB repo http://database.oxfordjournals.org/content/2014/bau018.full

-

- Apr 2016

-

googleguacamole.wordpress.com googleguacamole.wordpress.com

-

socialboost.com.ua socialboost.com.ua

-

SocialBoost — is a tech NGO that promotes open data and coordinates the activities of more than 1,000 IT-enthusiasts, biggest IT-companies and government bodies in Ukraine through hackathons for socially meaningful IT-projects, related to e-government, e-services, data visualization and open government data. SocialBoost has developed dozens of public services, interactive maps, websites for niche communities, as well as state projects such as data.gov.ua, ogp.gov.ua. SocialBoost builds the bridge between civic activists, government and IT-industry through technology. Main goal is to make government more open by crowdsourcing the creation of innovative public services with the help of civic society.


-

-

mitpress.mit.edu mitpress.mit.edu

-

Great Principles of Computing, by Peter J. Denning and Craig H. Martell

This is a book about the whole of computing—its algorithms, architectures, and designs.

Denning and Martell divide the great principles of computing into six categories: communication, computation, coordination, recollection, evaluation, and design.

"Programmers have the largest impact when they are designers; otherwise, they are just coders for someone else's design."

-

-

techcrunch.com techcrunch.com

-

We should have control of the algorithms and data that guide our experiences online, and increasingly offline. Under our guidance, they can be powerful personal assistants.

Big business has been very militant about protecting their "intellectual property". Yet they regard every detail of our personal lives as theirs to collect and sell at whim. What a bunch of little darlings they are.

-

-

oerresearchhub.org oerresearchhub.org

-

OER Data Report

-

-

dauwhe.github.io dauwhe.github.io

-

Is it possible to add information to a resource without touching it?

That’s something we’ve been doing, yes.

-

-

wiki.surfnet.nl wiki.surfnet.nl

-

preferably

Delete "preferably". Limiting the scope of text mining to exclude societal and commercial purposes limits the usefulness to enterprises (especially SMEs that cannot mine on their own) as well as to society. These limitations have ramifications in terms of limiting the research questions that researchers can and will pursue.

-

Encourage researchers not to transfer the copyright on their research outputs before publication.

This statement is more generally applicable than just to TDM. Besides, "Encourage" is too weak a word here, and from a societal perspective, it would be far better if researchers were to retain their copyright (where it applies), but make their copyrightable works available under open licenses that allow publishers to publish the works, and others to use and reuse it.

-

-

gigadb.org gigadb.org

-

To maximize its utility

The unusual data release strategy, which involved crowdsourcing on Twitter, is discussed in more detail in this blog post: http://blogs.biomedcentral.com/gigablog/2011/08/03/notes-from-an-e-coli-tweenome-lessons-learned-from-our-first-data-doi/


-

-

thenewinquiry.com thenewinquiry.com Fitted

-

In December 2014, FitBit released a pledge stating that it “is deeply committed to protecting the security of your data.” Still, we may soon be obliged to turn over the sort of information the device is designed to collect in order to obtain medical coverage or life insurance. Some companies currently offer incentives like discounted premiums to members who volunteer information from their activity trackers. Many health and fitness industry experts say it is only a matter of time before all insurance providers start requiring this information.

-

-

gigadb.org gigadb.org

-

Related manuscripts:

See also this population genomics study in Nature Genetics that uses this data: http://www.nature.com/ng/journal/v45/n1/full/ng.2494.html See also this blog posting on data citation of this data (and related problems): http://blogs.biomedcentral.com/gigablog/2012/12/21/promoting-datacitation-in-nature/


-

-

www.nature.com www.nature.com

-

Accession codes

The panda and polar bear datasets should have been included in the data section rather than hidden in the URLs section. Production removed the DOIs and used (now dead) URLs instead, but for the working links and insight see the following blog: http://blogs.biomedcentral.com/gigablog/2012/12/21/promoting-datacitation-in-nature/

-

-

gigadb.org gigadb.org

-

doi:10.1016/j.cell.2014.03.054

More on the backstory, and on other papers using and citing this data before the Cell publication, in this blog posting: http://blogs.biomedcentral.com/gigablog/2014/05/14/the-latest-weapon-in-publishing-data-the-polar-bear/


-

-

gigadb.org gigadb.org

-

To date 5'-cytosine methylation (5mC) has not been reported in Caenorhabditis elegans, and using ultra-performance liquid chromatography/tandem mass spectrometry (UPLC-MS/MS) the existence of DNA methylation in T. spiralis was detected, making it the first 5mC reported in any species of nematode.

As this is a novel and potentially controversial finding, the huge amounts of supporting data are deposited here to help others follow on and reproduce the results. This won the BMC Open Data Prize, as the judges were impressed by the numerous extra steps taken by the authors in optimizing the openness and easy accessibility of this data, and were keen to emphasize that the value of open data for such breakthrough science lies not only in providing a resource, but also in conferring transparency to unexpected conclusions that others will naturally wish to challenge. You can see more in the blog posting and interview with the authors here: http://blogs.biomedcentral.com/gigablog/2013/10/02/open-data-for-the-win/


-

-

biosharing.org biosharing.org

-

Giga Science Database

For more about GigaDB, see the paper in Database Journal: http://database.oxfordjournals.org/content/2014/bau018.full


-

-

edtechdigest.wordpress.com edtechdigest.wordpress.com

-

The New Politics of Educational Data

-

- Mar 2016

-

www.jonbecker.net www.jonbecker.net

-

Ranty Blog Post about Big Data, Learning Analytics, & Higher Ed

-

-

medium.com medium.com

-

There is a human story behind every data point and as educators and innovators we have to shine a light on it.

-

-

www.ncbi.nlm.nih.gov www.ncbi.nlm.nih.gov

-

three-dimensional inversion recovery-prepped spoiled grass coronal series

ID: BPwPsyStructuralData SubjectGroup: BPwPsy Acquisition: Anatomical DOI: 10.18116/C6159Z

ID: BPwoPsyStructuralData SubjectGroup: BPwoPsy Acquisition: Anatomical DOI: 10.18116/C6159Z

ID: HCStructuralData SubjectGroup: HC Acquisition: Anatomical DOI: 10.18116/C6159Z

ID: SZStructuralData SubjectGroup: SZ Acquisition: Anatomical DOI: 10.18116/C6159Z

-

-

opentextbc.ca opentextbc.ca

-

Open data

Sadly, there may not be much work on opening up data in Higher Education. For instance, there was only one panel at last year’s international Open Data Conference. https://www.youtube.com/watch?v=NUtQBC4SqTU

Looking at the interoperability of competency profiles, been wondering if it could be enhanced through use of Linked Open Data.

-

-

amstat.tandfonline.com amstat.tandfonline.com

-

American Statistical Association statement on p-values

-

-

www.genomeweb.com www.genomeweb.com

-

right to privacy, while allowing them to make an informed choice about taking reasonable risks to their privacy in order to help advance research

-

- Feb 2016

-

-

As Big-Data Companies Come to Teaching, a Pioneer Issues a Warning

-

-

www.whitehouse.gov www.whitehouse.gov

-

federally funded research publicly accessible are becoming the norm

-

-

blog.databaseanimals.com blog.databaseanimals.com

-

I read my first books on data mining back in the early 1990's and one thing I read was that "80% of the effort in a data mining project goes into data cleaning."

-

-

www.techdirt.com www.techdirt.com

-

"It comes down to what is the reason for our existence? It's to accelerate science, not to make money."

-

-

leanpub.com leanpub.com

-

Books on data science and R programming by Roger D. Peng of Johns Hopkins.

-

-

blog.cloudera.com blog.cloudera.com

-

Great explanation of 15 common probability distributions: Bernoulli, Uniform, Binomial, Geometric, Negative Binomial, Exponential, Weibull, Hypergeometric, Poisson, Normal, Log Normal, Student's t, Chi-Squared, Gamma, Beta.

-

-

f1000research.com f1000research.com

-

Since its start in 1998, Software Carpentry has evolved from a week-long training course at the US national laboratories into a worldwide volunteer effort to improve researchers' computing skills. This paper explains what we have learned along the way, the challenges we now face, and our plans for the future.

http://software-carpentry.org/lessons/

Basic programming skills for scientific researchers. SQL, and Python, R, or MATLAB.

http://www.datacarpentry.org/lessons/

Managing and analyzing data.


-

- Jan 2016

-

www.readability.com www.readability.com

-

The journal will accommodate data but should be presented in the context of a paper. The Winnower should not act as a forum for publishing data sets alone. It is our feeling that data in absence of theory is hard to interpret and thus may cause undue noise to the site.

This will also be the case for the data visualizations shown here, once the data is curated and verified properly. Still, data visualizations can start a global conversation without having the full paper translated into English.


-

-

courses.csail.mit.edu courses.csail.mit.edu

-

50 Years of Data Science, David Donoho (2015, 41 pages)

This paper reviews some ingredients of the current "Data Science moment", including recent commentary about data science in the popular media, and about how/whether Data Science is really different from Statistics.

The now-contemplated field of Data Science amounts to a superset of the fields of statistics and machine learning which adds some technology for 'scaling up' to 'big data'.

-

-

www.stm-assoc.org www.stm-assoc.org

-

The explosion of data-intensive research is challenging publishers to create new solutions to link publications to research data (and vice versa), to facilitate data mining and to manage the dataset as a potential unit of publication. Change continues to be rapid, with new leadership and coordination from the Research Data Alliance (launched 2013): most research funders have introduced or tightened policies requiring deposit and sharing of data; data repositories have grown in number and type (including repositories for “orphan” data); and DataCite was launched to help make research data cited, visible and accessible. Meanwhile publishers have responded by working closely with many of the community-led projects; by developing data deposit and sharing policies for journals, and introducing data citation policies; by linking or incorporating data; by launching some pioneering data journals and services; by the development of data discovery services such as Thomson Reuters’ Data Citation Index (page 138).

-

-

www.whitehouse.gov www.whitehouse.gov

-

It doesn’t work if we think the people who disagree with us are all motivated by malice, or that our political opponents are unpatriotic. Democracy grinds to a halt without a willingness to compromise; or when even basic facts are contested, and we listen only to those who agree with us.

C'mon, civic technologists, government innovators, open data advocates: this can be a call to arms. Isn't the point of "open government" to bring people together to engage with their leaders, provide the facts, and allow more informed, engaged debate?

-

-

quoracast.quora.com quoracast.quora.com

-

"A friend of mine said a really great phrase: 'remember those times in early 1990's when every single brick-and-mortar store wanted a webmaster and a small website. Now they want to have a data scientist.' It's good for an industry when an attitude precedes the technology."

-

-

wilkelab.org wilkelab.org

-

UT Austin SDS 348, Computational Biology and Bioinformatics. Course materials and links: R, regression modeling, ggplot2, principal component analysis, k-means clustering, logistic regression, Python, Biopython, regular expressions.

-

-

phys.org phys.org

-

paradox of unanimity - Unanimous or nearly unanimous agreement doesn't always indicate the correct answer. If agreement is unlikely, it indicates a problem with the system.

Witnesses who only saw a suspect for a moment are not likely to be able to pick them out of a lineup accurately. If several witnesses all pick the same suspect, you should be suspicious that bias is at work. Perhaps these witnesses were cherry-picked, or they were somehow encouraged to choose a particular suspect.
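The reasoning above can be made concrete with a small Bayesian calculation. This is only a sketch under stated assumptions, not the model from the article: the prior probability of a biased lineup (`prior_biased`), the chance that a fair witness picks the suspect (`p_pick_if_fair`), and the assumption that a biased process always yields the same pick are all illustrative values chosen here.

```python
def posterior_biased(n_witnesses, prior_biased=0.01, p_pick_if_fair=0.5):
    """Posterior probability that the lineup process is biased, given that
    all n_witnesses picked the same suspect.

    Assumptions (illustrative, not from the article):
    - a biased process makes every witness pick the suspect (probability 1);
    - under a fair process, witnesses pick the suspect independently,
      each with probability p_pick_if_fair.
    """
    likelihood_biased = 1.0
    likelihood_fair = p_pick_if_fair ** n_witnesses
    numerator = prior_biased * likelihood_biased
    return numerator / (numerator + (1.0 - prior_biased) * likelihood_fair)


if __name__ == "__main__":
    # The more unanimous witnesses there are, the more likely bias becomes.
    for n in (3, 10, 20):
        print(n, round(posterior_biased(n), 4))
```

With unreliable witnesses, each additional agreeing witness makes the fair explanation less plausible, so even a small prior suspicion of bias comes to dominate.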

-

-

rpy2.readthedocs.org rpy2.readthedocs.org

-

Python interface to the R programming language. Use R functions and packages from Python. https://pypi.python.org/pypi/rpy2

-

-

matthewlincoln.net matthewlincoln.net

-

Guidelines for publishing GLAM data (galleries, libraries, archives, museums) on GitHub. It applies to publishing any kind of data anywhere.

- Document the schema of the data.

- Make the usage terms and conditions clear.

- Tell people how to report issues, or tell them that they're on their own.

- Tell people whether you accept pull requests (user-contributed edits and additions), and how.

- Tell people how often the data will be updated, even if the answer is "sporadically" or "maybe never".

https://en.wikipedia.org/wiki/Open_Knowledge

http://openglam.org/faq/

-

-

manual.calibre-ebook.com manual.calibre-ebook.com

-

Set Semantics. This tool is used to set semantics in EPUB files. Semantics are simply links in the OPF file that identify certain locations in the book as having special meaning. You can use them to identify the foreword, dedication, cover, table of contents, etc. Simply choose the type of semantic information you want to specify and then select the location in the book the link should point to. This tool can be accessed via Tools->Set semantics.

Though it’s described in such a simple way, there might be hidden power in adding these tags, especially when we bring eBooks to the Semantic Web. Though books are the prime example of a “Web of Documents”, they can also contribute to the “Web of Data”, if we enable them. It might take long, but it could happen.

-

- Dec 2015

-

rainystreets.wikity.cc rainystreets.wikity.cc

-

The idea was to pinpoint the doctors prescribing the most pain medication and target them for the company’s marketing onslaught. That the databases couldn’t distinguish between doctors who were prescribing more pain meds because they were seeing more patients with chronic pain or were simply looser with their signatures didn’t matter to Purdue.

-

-

www.edsurge.com www.edsurge.com

-

Users publish coursework, build portfolios or tinker with personal projects, for example.

Useful examples. Could imagine something like Wikity, FedWiki, or other forms of content federation to work through this in a much-needed upgrade from the “Personal Home Pages” of the early Web. Do see some connections to Sandstorm and the new WordPress interface (which, despite being targeted at WordPress.com users, also works on self-hosted WordPress installs). Some of it could also be about the longstanding dream of “keeping our content” in social media. Yes, as in the reverse from Facebook. Multiple solutions exist to do exports and backups. But it can be so much more than that and it’s so much more important in educational contexts.

-

-

-

(Not surprisingly, none of the bills provide for funding to help schools come up to speed.)

-

-

bavatuesdays.com bavatuesdays.com

-

A personal API builds on the domain concept—students store information on their site, whether it’s class assignments, financial aid information or personal blogs, and then decide how they want to share that data with other applications and services. The idea is to give students autonomy in how they develop and manage their digital identities at the university and well into their professional lives

-

-

mfeldstein.com mfeldstein.com

-

sufficiently rich information

-

who owns the data

-

It’s educators who come up with hypotheses and test them using a large data set.

And we need an ever-larger data set, right?

-

-

bits.blogs.nytimes.com bits.blogs.nytimes.com

-

nearly $8 billion prekindergarten through 12th-grade education technology software market

-

-

hackeducation.com hackeducation.com

-

As usual, @AudreyWatters puts things in proper perspective.

-

-

www.theatlantic.com www.theatlantic.com

-

“What we’re seeing is that the general public wants to read scholarly papers.”

-

-

mfeldstein.com mfeldstein.com

-

increased investment in professional development and teaching-friendly tenure and promotion practices

Even those who adopt a Taylorist model of education may understand that “it takes money to save money”.

-

-

code.facebook.com code.facebook.com

-

Big Sur is our newest Open Rack-compatible hardware designed for AI computing at a large scale. In collaboration with partners, we've built Big Sur to incorporate eight high-performance GPUs

-

-

support.vitalsource.com support.vitalsource.com

-

The EDUPUB Initiative VitalSource regularly collaborates with independent consultants and industry experts including the National Federation of the Blind (NFB), American Foundation for the Blind (AFB), Tech For All, JISC, Alternative Media Access Center (AMAC), and others. With the help of these experts, VitalSource strives to ensure its platform conforms to applicable accessibility standards including Section 508 of the Rehabilitation Act and the Accessibility Guidelines established by the Worldwide Web Consortium known as WCAG 2.0. The state of the platform's conformance with Section 508 at any point in time is made available through publication of Voluntary Product Accessibility Templates (VPATs). VitalSource continues to support industry standards for accessibility by conducting conformance testing on all Bookshelf platforms – offline on Windows and Macs; online on Windows and Macs using standard browsers (e.g., Internet Explorer, Mozilla Firefox, Safari); and on mobile devices for iOS and Android. All Bookshelf platforms are evaluated using industry-leading screen reading programs available for the platform including JAWS and NVDA for Windows, VoiceOver for Mac and iOS, and TalkBack for Android. To ensure a comprehensive reading experience, all Bookshelf platforms have been evaluated using EPUB® and enhanced PDF books.

Could see a lot of potential for Open Standards, including annotations. What’s not so clear is how they can manage to produce such ePub while maintaining their DRM-focused practice. Heard about LCP (Lightweight Content Protection). But have yet to get a fully-accessible ePub which is also DRMed in such a way.

-

-

-

add tags for categorization and search

Well-structured annotations can pave the way towards Linked Open Data.

-

-

iso-sc36.auf.org iso-sc36.auf.org

-

tout enregistrement MLR conforme au profil Normetic 2.0 est automatiquement conforme au profil d’application MLR de base.

L’interopérabilité est essentielle à l’avènement du Web des données liées (en éducation comme ailleurs).

-

-

math.mit.edu math.mit.edu CT4S.pdf

-

Data gathering is ubiquitous in science. Giant databases are currently being mined for unknown patterns, but in fact there are many (many) known patterns that simply have not been catalogued. Consider the well-known case of medical records. A patient’s medical history is often known by various individual doctor-offices but quite inadequately shared between them. Sharing medical records often means faxing a hand-written note or a filled-in house-created form between offices.

-

-

blogs.edweek.org blogs.edweek.org

-

Textbooks Out of Step With Scientists on Climate Change, Study Says

-

-

www.sr.ithaka.org www.sr.ithaka.org

-

As of May 1, 2015, there is a new requirement from some research councils that research data must also be openly available,

data requirements

-

-

www.meanboyfriend.com www.meanboyfriend.com

-

Among the most useful summaries I have found for Linked Data, generally, and in relationship to libraries, specifically. After first reading it, got to hear of the acronym LODLAM: “Linked Open Data for Libraries, Archives, and Museums”. Been finding uses for this tag, in no small part because it gets people to think about the connections between diverse knowledge-focused institutions, places where knowledge is constructed. Somewhat surprised academia, universities, colleges, institutes, or educational organisations like schools aren’t explicitly tied to those others. In fact, it’s quite remarkable that education tends to drive much development in #OpenData, as opposed to municipal or federal governments, for instance. But it’s still very interesting to think about Libraries and Museums as moving from a focus on (a Web of) documents to a focus on (a Web of) data.

-

- Nov 2015

-

news.mit.edu news.mit.edu

-

The effectiveness of infographics, or any other form of communication, can be measured in terms of whether people:

- pay attention to it

- understand it

- remember it later

Titles are important. Ideally, the title should concisely state the main point you want people to grasp.

Recall of both labels and data can be improved by using redundancy -- text as well as images. For example:

- flags in addition to country names

- proportional bubbles in addition to numbers.

-

-

chronicle.com chronicle.com

-

it apparently meant allowing students to see the syllabus before they register

There are initiatives to do much more than this, including using Open Data on syllabi to delve down into course content.

-

-

europa.eu europa.eu

-

That's why I say that data is the new oil for the digital age

-

-

www.randalolson.com www.randalolson.com

-

TPOT is a Python tool that automatically creates and optimizes machine learning pipelines using genetic programming. Think of TPOT as your “Data Science Assistant”: TPOT will automate the most tedious part of machine learning by intelligently exploring thousands of possible pipelines, then recommending the pipelines that work best for your data.

https://github.com/rhiever/tpot TPOT (Tree-based Pipeline Optimization Tool) Built on numpy, scipy, pandas, scikit-learn, and deap.

-

-

booktype.okfn.org booktype.okfn.org

-

Open Education Handbook 2014

All about open education

-

- Oct 2015

-

www.campuscomputing.net www.campuscomputing.net

-

The Coming of OER. Related to the enthusiasm for digital instructional resources, four-fifths (81 percent) of the survey participants agree that “Open Source textbooks/Open Education Resource (OER) content “will be an important source for instructional resources in five yea

-

-

web.hypothes.is web.hypothes.is

-

why not annotate, say, the Eiffel Tower itself

As long as it has some URI, it can be annotated. Any object in the world can be described through the Semantic Web. Especially with Linked Open Data.

-

If you deal with PDFs online, you’ve probably noticed that some are different from others. Some are really just images.

First step in Linked Open Data is moving away from image PDFs.

-

-

-

Open Research MOOC

-

-

www.iowastatedaily.com www.iowastatedaily.com

-

The second level of Open Access is Gold Open Access, which requires the author to pay the publishing platform a fee to have their work placed somewhere it can be accessed for free. These fees can range in the hundreds to thousands of dollars.

Not necessarily true. This is a misconception. "About 70 percent of OA journals charge no APCs at all. We’ve known this for a decade but it’s still widely overlooked by people who should know better." -Suber http://lj.libraryjournal.com/2015/09/opinion/not-dead-yet/an-interview-with-peter-suber-on-open-access-not-dead-yet/#_

-

- Sep 2015

-

docs.google.com docs.google.com

-

In a nutshell, an ontology answers the question, “What things can we say exist in a domain, and how do we describe those things that relate to each other?”

-

According to the inventor of the World Wide Web, Tim Berners-Lee, there are four key principles of Linked Data (Berners-Lee, 2006): (1) Use URIs to denote things. (2) Use HTTP URIs so that these things can be referred to and looked up (dereferenced) by people and user agents. (3) Provide useful information about the thing when its URI is dereferenced, leveraging standards such as RDF, SPARQL. (4) Include links to other related things (using their URIs) when publishing data on the web.
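A minimal sketch of how these principles fit together, using plain Python tuples as subject-predicate-object triples. The `example.org` URIs, the in-memory `GRAPH`, and the helper names are hypothetical stand-ins for real HTTP lookups and an RDF library; the two FOAF property URIs are the only real vocabulary terms used.

```python
FOAF_NAME = "http://xmlns.com/foaf/0.1/name"
FOAF_KNOWS = "http://xmlns.com/foaf/0.1/knows"

# Hypothetical in-memory "web": each HTTP URI maps to the triples that
# would be served when that URI is dereferenced.
GRAPH = {
    "http://example.org/people/alice": [
        ("http://example.org/people/alice", FOAF_NAME, "Alice"),
        # A link to another thing, identified by its own URI.
        ("http://example.org/people/alice", FOAF_KNOWS,
         "http://example.org/people/bob"),
    ],
    "http://example.org/people/bob": [
        ("http://example.org/people/bob", FOAF_NAME, "Bob"),
    ],
}


def dereference(uri):
    """Look up a URI and return the triples describing that thing."""
    return GRAPH.get(uri, [])


def follow_links(uri):
    """Return the URIs this resource links to that can be looked up in turn."""
    return [obj for (_, _, obj) in dereference(uri) if obj in GRAPH]


if __name__ == "__main__":
    start = "http://example.org/people/alice"
    for linked in follow_links(start):
        print(linked, dereference(linked))
```

The point of the sketch is that a client needs nothing beyond the URI itself to discover more data: looking up one thing yields links to others.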

-

In section 4.1.3.2 of the xAPI specification, it states “Activity Providers SHOULD use a corresponding existing Verb whenever possible.”

-

-

europepmc.org europepmc.org

-

This is problematic because the article has been influential in the literature supporting the use of antidepressants in adolescents.

Example of the type of harm that lack of transparency can lead to.

-

Access to primary data from trials has important implications for both clinical practice and research, including that published conclusions about efficacy and safety should not be read as authoritative. The reanalysis of Study 329 illustrates the necessity of making primary trial data and protocols available to increase the rigour of the evidence base.

How can anyone argue that science isn't served by making primary data available? We must recognize that more people are harmed by not sharing data than are harmed by data being shared.

-

-

www.sciencedirect.com www.sciencedirect.com

-

(B) Dyn labeling in dyn-IRES-cre x Ai9-tdTomato compared to in situ images from the Allen Institute for Brain Science in a sagittal section highlighting presence of dyn in the striatum, the hippocampus, BNST, amygdala, hippocampus, and substantia nigra. All images show tdTomato (red) and Nissl (blue) staining.(C) Coronal section highlighting dynorphinergic cell labeling in the NAc as compared to the Allen Institute for Brain Science.

Allen Brain Institute

-

-

www.sciencemag.org www.sciencemag.org

-

Because cue-evoked DA release developed throughout learning, we examined whether DA release correlated with conditioned-approach behavior. Figure 1E and table S1 show that the ratio of the CS-related DA release to the reward-related DA release was significantly (r2 = 0.68; P = 0.0005) correlated with number of CS nosepokes in a conditioning session (also see fig. S4).

single trial analysis


-

-

www.confluent.io www.confluent.io

-

This approach is called change data capture, which I wrote about recently (and implemented on PostgreSQL). As long as you’re only writing to a single database (not doing dual writes), and getting the log of writes from the database (in the order in which they were committed to the DB), then this approach works just as well as making your writes to the log directly.

Interesting section on applying log-orientated approaches to existing systems.
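A rough illustration of the change data capture idea quoted above: a derived store is kept in sync by replaying the database's write log in commit order. This is a sketch, not the article's PostgreSQL implementation; the `Change` record, its `lsn` field, and `apply_changes` are hypothetical names, and the log is assumed to arrive already ordered by commit.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass(frozen=True)
class Change:
    lsn: int               # position in the commit log (hypothetical field)
    key: str
    value: Optional[str]   # None means the row was deleted


def apply_changes(log: Iterable[Change], derived: Dict[str, str],
                  last_applied: int = 0) -> int:
    """Replay changes, in commit order, into a derived store.

    Changes at or below last_applied are skipped, so replaying the same
    log twice leaves the derived store unchanged.
    """
    for change in log:
        if change.lsn <= last_applied:
            continue
        if change.value is None:
            derived.pop(change.key, None)
        else:
            derived[change.key] = change.value
        last_applied = change.lsn
    return last_applied


if __name__ == "__main__":
    log = [Change(1, "a", "1"), Change(2, "b", "2"), Change(3, "a", None)]
    cache: Dict[str, str] = {}
    position = apply_changes(log, cache)
    print(cache, position)
```

Tracking the last applied position is what lets a consumer restart and resume without double-applying writes, which is why a single ordered log avoids the inconsistencies of dual writes.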

-

- Aug 2015

-

www.edudemic.com www.edudemic.com

-

Shared information

The “social”, with an embedded emphasis on the data part of knowledge building and a nod to solidarity. Cloud computing does go well with collaboration and spelling out the difference can help lift some confusion.

-

-

hypothes.is hypothes.is

-

publisher or museum

Potential for LODLAM!

-

-

www.w3.org www.w3.org

-

I feel that there is a great benefit to fixing this question at the spec level. Otherwise, what happens? I read a web page, I like it and I am going to annotate it as being a great one -- but first I have to find out whether the URI my browser used is meant, conceptually by the author of the page, to represent some abstract idea?

-

-

blogs.nature.com blogs.nature.com

-

data deposition is limited to researchers working at the same institution,

Not necessarily. For many institutions, as long as one of the researchers is affiliated, the data can be deposited.

-

-

europepmc.org europepmc.org

-

Big data to knowledge (BD2K)

Would like to know more about this term and the HHS initiative.

-

the definition of a “dataset,”

this is interesting, and will be interesting to track within and across disciplines

-

Approximately 87% of the invisible datasets consist of data newly collected for the research reported; 13% reflect reuse of existing data. More than 50% of the datasets were derived from live human or non-human animal subjects.

Another good statistic to have

-

Among articles with invisible datasets, we found an average of 2.9 to 3.4 datasets, suggesting there were approximately 200,000 to 235,000 invisible datasets generated from NIH-funded research published in 2011.

This is a good statistic to have handy.
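
A quick consistency check on the quoted figures, as a sketch: dividing each end of the dataset range by the matching per-article average gives the implied number of articles. Pairing low with low and high with high is an assumption; the quoted passage does not state the article count, and the variable names are illustrative.

```python
# Figures quoted in the passage above.
datasets_per_article = (2.9, 3.4)     # average invisible datasets per article
total_datasets = (200_000, 235_000)   # estimated invisible datasets, 2011

# Assumption: the low total pairs with the low average, high with high.
implied_articles = [
    total / per_article
    for total, per_article in zip(total_datasets, datasets_per_article)
]

for total, per_article, articles in zip(
    total_datasets, datasets_per_article, implied_articles
):
    print(f"{total:,} datasets / {per_article} per article = {articles:,.0f} articles")
```

Both ends of the range imply roughly 69,000 articles with invisible datasets, so the two quoted ranges are consistent with each other.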

-

-

dublincore.org dublincore.org

-

Dataset

what about unstructured data?

-

- Jun 2015

-

-

The comparison between the model and the experts is based on the species distribution models (SDMs), not on actual species occurrences, so the observed difference could be due to weakness in the SDM predictions rather than the model outperforming the experts. The explanation for this choice in Footnote 4 is reasonable, but I wonder if it could be addressed by rarefying the sampling appropriately.

-

-

chronicle.com chronicle.com

-

If you can’t find the correct web page, ask a reference librarian.

YES, ASK US. Also, we love to work with faculty on managing their data!

-

-

-

possible with modern technology,

This is terrifying but also fascinating. Imagine the data for MFA programs on the content/style whatever on the last page readers thumbed before stopping the turning!

Also, couldn't this system be easily gamed: creating bots to "peruse" texts at the right pace repeatedly?

-

-

docs.gatesfoundation.org docs.gatesfoundation.org

-

Generating student performance data that can help students, teachers, and parents identify areas for further teaching or practice

Data, data, data

-

-

nepanode.anl.gov nepanode.anl.gov

-

Critical Habitat - Terrestrial - Polygon [USFWS] Critical Habitat - Terrestrial - Line [USFWS]

Critical Habitat Layers need to be updated

-

- May 2015

-

www.theengineroom.org www.theengineroom.org

-

The book would need to be set up on a website first

Not necessarily, if PDF is in the mix, it can be the medium for annotations that might later anchor to a website -- even if PDFs are distributed to participants and used locally as mentioned above.

-

-

-

periods have proven to work poorly with Linked Data principles, which require well-defined entities for linking.

-

- Apr 2015

-

pywb-h.herokuapp.com pywb-h.herokuapp.com

-

There is now a strong body of evidence showing failure to comply with results-reporting requirements across intervention classes, even in the case of large, randomised trials [3–7]. This applies to both industry and investigator-driven trials. I

Compliance not mechanism

-

“the registration of all interventional trials is a scientific, ethical, and moral responsibility”

World Health Organization's statement

-

-

europepmc.org europepmc.org

-

Anyone withholding the methods and results of a clinical trial is already in breach of multiple codes and regulations, including the Declaration of Helsinki, various promises from industry and professional bodies, and, in many cases, the United States Food and Drug Administration (FDA) Amendment Act of 2007. Indeed, a recently published cohort study of trials in clinicaltrials.gov found that more than half had failed to post results; and even though the FDA is entitled to issue fines of $10,000 a day for transgressions, no such fines have ever been levied [3].

Sticks don't work if they aren't used. I find this rather disturbing.

-

The best currently available evidence shows that the methods and results of clinical trials are routinely withheld from doctors, researchers, and patients [2–5], undermining our best efforts at informed decision making.

-

-

www.badscience.net www.badscience.net

-

This week there was an amazing landmark announcement from the World Health Organisation: they have come out and said that everyone must share the results of their clinical trials, within 12 months of completion, including old trials (since those are the trials conducted on currently used treatments).

-

-

www.ncbi.nlm.nih.gov www.ncbi.nlm.nih.gov

-

First, the domain is a poor candidate because the domain of all entities relevant to neurobiological function is extremely large, highly fragmented into separate subdisciplines, and riddled with lack of consensus (Shirky, 2005).

Probably a good thing to add to the Complex Data integration workshop write up

-

-

dmm.biologists.org dmm.biologists.org

-

Wouldn’t it be useful, both to the scientific community and the wider world, to increase the publication of negative results?

-

- Mar 2015

-

flowingdata.com flowingdata.com

-

the future of visualization

really true!

show data variations, not design variations

-

-

iopscience.iop.org iopscience.iop.org

-

Geneva group “high” mass-loss evolutionary tracks

Is there an HTTP link for these evolutionary models?

-

- Feb 2015

-

wiki.chn.io wiki.chn.io

-

Num / Num summarizes a graph with nodes / arcs.

The underlying graph model is not explicitly mentioned here or in the README of the plugin.

-

-

go.coverity.com go.coverity.com

-

the critical role that big data open source projects play in the Internet of Things (IoT).

-

- Jan 2015

-

-

Make no mistake, in today's digital age, we are most definitely "renters" with virtually no rights—including rights to our data.

-

The Internet of Things promises to create mountains upon mountains of data, but none of it will be yours.

-

-

newleftreview.org newleftreview.org

-

The big question, of course, is whether that player has to be a private capitalist corporation, or some federated, publicly-run set of services that could reach a data-sharing agreement free of monitoring by intelligence agencies.

So there we are. It is pretty straightforward really.

-

But if you turn data into a money-printing machine for citizens, whereby we all become entrepreneurs, that will extend the financialization of everyday life to the most extreme level, driving people to obsess about monetizing their thoughts, emotions, facts, ideas—because they know that, if these can only be articulated, perhaps they will find a buyer on the open market. This would produce a human landscape worse even than the current neoliberal subjectivity. I think there are only three options. We can keep these things as they are, with Google and Facebook centralizing everything and collecting all the data, on the grounds that they have the best algorithms and generate the best predictions, and so on. We can change the status of data to let citizens own and sell them. Or citizens can own their own data but not sell them, to enable a more communal planning of their lives. That’s the option I prefer.

Very well thought out. Obviously must know about read write web, TLS certificate issues etc. But what does neoliberal subjectivity mean? An interesting phrase.

-

- Dec 2014

-

www.openthesaurus.de www.openthesaurus.de

-

-

publishing.aip.org publishing.aip.org

-

This is the redirected link for the "Physics Auxiliary Publication Service". But there is no data here.

-

- Nov 2014

-

www.hackeducation.com www.hackeducation.com

-

If we believe in equality, if we believe in participatory democracy and participatory culture, if we believe in people and progressive social change, if we believe in sustainability in all its environmental and economic and psychological manifestations, then we need to do better than slap that adjective “open” onto our projects and act as though that’s sufficient or — and this is hard, I know — even sound.

-

that the moments when students generate “education data” is, historically, moments when they come into contact with the school and more broadly the school and the state as a disciplinary system

-

- May 2014

-

www-group.slac.stanford.edu www-group.slac.stanford.edu

-

SSPP # 7.2 Power Usage Effectiveness (PUE) (Electronic Maximum annual weighted average PUE of 1.4 by FY15 )

SLAC target PUE of 1.4 by FY15

-

-

blogs.berkeley.edu blogs.berkeley.edu

-

Google’s ultra-efficient data centers, with a PUE of 1.12, are beating the PUE curve by miles.

Google's PUE is 1.12

-

-

www.i2sl.org www.i2sl.org

-

When the project is complete later this year (all done while the existing data center remained in operation!), the data center's annual PUE will drop from 1.5 to 1.2, saving 20 percent of its annual electrical cost.

Warren Hall target efficiency: 1.2 as of 2011
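
The quoted 20 percent follows from the definition of PUE (total facility energy divided by IT equipment energy). A small sketch, assuming the IT load stays the same before and after the retrofit; `facility_savings` is a hypothetical helper name, not something from the article.

```python
def facility_savings(pue_before: float, pue_after: float) -> float:
    """Fractional drop in total facility energy for an unchanged IT load.

    PUE = total facility energy / IT equipment energy, so with the IT load
    held fixed (an assumption), total energy scales linearly with PUE.
    """
    if pue_before < 1.0 or pue_after < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - pue_after / pue_before


# Figures from the quoted passage: annual PUE dropping from 1.5 to 1.2.
warren_hall = facility_savings(1.5, 1.2)
print(f"Warren Hall: {warren_hall:.0%} less total energy")
```

With 1.5 and 1.2 the ratio is 0.8, which gives the 20 percent saving cited in the passage. The same helper applies to any of the other PUE targets noted here, under the same fixed-load assumption.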

-

-

www.mghpcc.org www.mghpcc.org

-

The MGHPCC is targeting a PUE of less than 1.3. A recent report cites typical data center PUEs at 1.9. This means that our facility can expect to

Target of 1.3 (vs typical data centers around 1.9) PUE

-

- Apr 2014

-

www.dbms2.com www.dbms2.com

-

Mike Olson of Cloudera is on record as predicting that Spark will be the replacement for Hadoop MapReduce. Just about everybody seems to agree, except perhaps for Hortonworks folks betting on the more limited and less mature Tez. Spark’s biggest technical advantages as a general data processing engine are probably:

- The Directed Acyclic Graph processing model. (Any serious MapReduce-replacement contender will probably echo that aspect.)
- A rich set of programming primitives in connection with that model.
- Support also for highly-iterative processing, of the kind found in machine learning.
- Flexible in-memory data structures, namely the RDDs (Resilient Distributed Datasets).
- A clever approach to fault-tolerance.

Spark's advantages:

- DAG processing model