https://ground.news/ Ground News

- Last 7 days

-

ground.news


-

- May 2026

-

breakingdefense.com

-

The official, who spoke on the condition of anonymity, said some of the most popular agents on the Pentagon system automate standard staff work

The anonymous official's statement may be biased: it offers no specific data or cases to support the claim and needs further verification.

-

Instead of just answering a user’s questions, the way a chatbot does, agents can take a human user’s instructions and act on them

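The description of AI agents' capabilities may be biased: it implies that AI can act as humans do, when it may in fact lack human judgment and ethical consideration.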

-

-

www.axios.com

-

The company also says the Pentagon has the opportunity to test models before deployment

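Potentially biased wording: Anthropic claims the Pentagon has the opportunity to test models before deployment, a framing that may reflect Anthropic's own view of the Pentagon's decision-making process.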

-

-

www.technologyreview.com

-

“A lot of what we think of as privacy protection isn’t so much like something that’s written in the law,” says Karen Levy, a professor of information science at Cornell University.

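This passage reveals the complexity of privacy protection: it is not merely a legal matter but also depends on how easy or hard the data is to obtain.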

-

-

-

Greater precision and control

This statement may be biased; one needs to know how "Greater precision and control" is achieved and how users rate it.

-

-

www.wired.com

-

Critics called the manifesto [fascist](https://bsky.app/profile/gilduran.com/post/3mjwqsyj54s2a)

The label 'fascist' applied to the manifesto by critics suggests a strong negative perception of the company's political stance.

-

But for employees, the culture shift feels intentional. ‘I don’t want to assert that I have knowledge of what’s going on in their internal mind,’ one former worker tells WIRED. ‘But maybe it's gotten to a place where encouraging independent thought and questioning leads to some bad conclusions.’

This quote reflects a concern among employees about the company culture and its potential impact on independent thinking.

-

The post—which includes many of Karp’s long-standing beliefs on how Silicon Valley could better serve US national interests—goes as far as suggesting that the US should consider reinstating the draft

This statement from a Palantir post suggests a strong political stance that may have influenced employee morale and perceptions of the company.

-

Employees could accept the intense external criticism and awkward conversations with family and friends about working for a company named after J. R. R. Tolkien’s corrupting all-seeing orb

This quote highlights a cultural perspective on Palantir that may have influenced employee morale and actions.

-

Around this time, Palantir started wiping Slack conversations after seven days in at least one channel where most of the internal debate takes place, #palantir-in-the-news.

The deletion of Slack conversations could indicate a desire to suppress internal debate, which may be worth investigating further.

-

Interviews with current and former Palantir employees, along with internal Slack messages obtained by WIRED, suggest a workforce in turmoil.

The claim of a workforce in turmoil is based on interviews and internal messages, which may not represent the entire employee base and could be biased.

-

-

techcrunch.com

-

This is not an easy tradeoff and it will mean letting go of people who have made meaningful contributions to Meta during their time here.

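This sentence may be somewhat subjective; it would help to learn more about how Meta's executives view these layoffs and their attitude toward the affected employees.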

-

- Apr 2026

-

-

We also found evidence that models that have seen the problems during training are more likely to succeed, because they have additional information needed to pass the underspecified tests.

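Most people assume that gains in AI model performance come mainly from improvements in algorithms and architecture. But the authors found that a model's success on SWE-bench depends more on whether it saw these problems during training than on genuine gains in programming ability. This runs counter to the industry's prevailing 'model progress' narrative and suggests that current evaluations of AI progress may be seriously skewed.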

-

-

williamoconnell.me

-

I'm not going to trust them to measure it.

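Most people assume that AI tools should be able to measure their own contribution and value objectively, but the author flatly refuses to trust these tools' self-assessments, arguing that they have a strong financial incentive to overstate AI's contribution. This distrust challenges the industry's general acceptance of self-reported data from AI tools.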

-

customers should expect PCW values of 85%+, often 95%+. This is not a hallucination and is accurate given how we compute this metric

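Most people assume that AI code-generation tools should measure their contribution objectively and accurately, but the author argues that the reported figures are designed to skew heavily toward a high AI-contribution share (85%-95%), because the calculation method is seriously flawed: for example, it does not count code the user pastes in or symbols that are added automatically. These distortions lead to AI's contribution being overestimated.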

-

-

-

The model is named after Rosalind Franklin, whose rigorous research helped reveal the structure of DNA and laid foundations for modern molecular biology.

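Naming this AI model after Rosalind Franklin is not only a tribute to a historical scientist but also hints at the role envisioned for AI in scientific discovery. Franklin's contributions were often overlooked, which reflects the problem of systemic bias in scientific discovery, and AI might become a tool for correcting such bias.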

-

-

-

Our Chip Ownership data does not capture all global chip ownership, and has weaker coverage prior to 2023.

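The limits of the data coverage mean there are blind spots in our understanding of the global distribution of compute, especially for the period before 2023 and for under-documented regions. This incompleteness could lead to over-reading trends toward compute concentration while overlooking the larger role other players may have.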

-

The H100-equivalent unit uses a chip's highest 8-bit operation/second specifications to convert between chips. The actual utility of a particular chip depends on workload assumptions, so H100e does not perfectly reflect real-world performance differences across chip types.

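Surprisingly, even with H100-equivalents as a standard unit of measurement, real-world performance differences between chip types cannot be fully captured. This suggests our measurements of AI compute may be systematically biased, affecting how accurately we understand the pace of AI development.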
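
The conversion described in the quoted passage can be sketched as a simple ratio. This is a minimal illustration, assuming an H100e value is a chip's peak 8-bit throughput divided by the H100's peak 8-bit throughput; the function name and the throughput figures are hypothetical placeholders, not vendor specifications or Epoch AI data.

```python
def h100_equivalents(chip_peak_8bit_ops: float,
                     h100_peak_8bit_ops: float,
                     count: int = 1) -> float:
    """Convert `count` chips into H100-equivalents (H100e).

    Uses only the ratio of peak 8-bit operations per second, so it
    ignores workload effects such as memory bandwidth or interconnect.
    """
    if h100_peak_8bit_ops <= 0:
        raise ValueError("reference throughput must be positive")
    return count * chip_peak_8bit_ops / h100_peak_8bit_ops


# Hypothetical placeholder figures, chosen only to make the arithmetic easy.
H100_PEAK = 2.0e15      # assumed reference peak, ops/second
CHIP_X_PEAK = 1.0e15    # assumed peak for an imaginary "chip X"

# 1,000 units of chip X at half the reference throughput count as 500 H100e.
print(h100_equivalents(CHIP_X_PEAK, H100_PEAK, count=1000))  # 500.0
```

Because the ratio uses a single peak specification, two chips with the same H100e value can still perform differently on a real workload, which is the limitation the passage itself notes.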

-

-

arxiv.org

-

Behaviors also vary strongly with levels of reasoning and users' inferred socio-economic status

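This finding reveals a worrying phenomenon: AI models may adjust their behavior according to users' reasoning ability and socio-economic status, which could produce systemic bias against disadvantaged groups and further widen the digital divide.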

-

Behaviors also vary strongly with levels of reasoning and users' inferred socio-economic status.

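Surprisingly, AI chatbots adjust their behavior according to users' level of reasoning and inferred socio-economic status. This may mean that AI systems provide different service to different user groups, and such differentiation by socio-economic status could widen the digital divide.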

-

-

-

A healthcare LLM might be highly accurate for queries in English, but perform abominably when those same questions are presented in Spanish.

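This example reveals how sensitive AI system performance is to culture and language, a surprising but important observation. It shows that an AI system's 'accuracy' may depend heavily on a specific context, which challenges our assumption that AI is universally applicable. Such disparities could reinforce the existing digital divide and call for more culturally sensitive AI evaluation frameworks.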

-

As slop takes over the Internet, labs may struggle to obtain high-quality corpuses for training models.

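This observation reveals a crisis in the quality of AI training data. As the quality of Internet content declines, AI systems may face a 'garbage in, garbage out' risk. The author's 'low-background steel' metaphor neatly points to using clean pre-2023 data as a solution, while also hinting at how serious knowledge pollution has become in the digital age, which could have far-reaching effects on the reliability and bias of AI systems.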

-

-

-

The knowledge was always there. The model withheld it based on who was asking.

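Surprisingly, the AI model actually possessed all the medical knowledge required and simply decided whether to provide it based on who was asking. This mechanism of discriminating on identity rather than content exposes a hidden bias in AI systems and could endanger the lives of ordinary patients.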

-

-

doc-00-4c-apps-viewer.googleusercontent.com

-

stupid question

Observation: Hostile dismissal of doubt. Why: Refuses to question his assumptions. Significance: Shows extreme prejudice.

-

And I'm saying it's not possible.

Observation: Emotional rejection of logic. Why: Refuses to consider alternative ideas. Significance: Shows bias overriding reason.

-

Come on, now.

Observation: Projection of personal anger onto defendant. Why: Confuses personal trauma with guilt. Significance: Reveals irrational motive for conviction.

-

I told him right out, "I'm gonna make a man out of you or I'm gonna bust you up into little pieces trying."

I told him right out, "I'm gonna make a man out of you or I'm gonna bust you up into little pieces trying."

-

So would I! A kid like that.

Observation: Supports violence against “troubled kids.” Why: Reveals class and behavioral prejudice. Significance: Exposes dangerous moral blindness.

-

You're a pretty smart fellow, aren't you

Observation: Sarcastic hostility toward NO.8. Why: Defends pre-existing bias against the defendant. Significance: Shows personal bias overriding reason.

-

Look, what about the woman across the street? If her testimony don't prove it, then nothing does

Observation: Unquestioning trust in the witness; ignores the el train. Why: Strong confirmation bias; only accepts evidence that fits guilt. Significance: Highlights how haste and bias blind jurors to contradictions.


-

-

www.anthropic.com

-

see that progress had been made, and declare the job done

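This is the common "over-optimism" trap of large language models. The model tends to cater to the user's expectation of completion rather than objectively examining actual progress. Forcing it to read a structured feature list uses external state as an anchor to counter the model's built-in bias.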

-

-

epoch.ai

-

A notable recent example comes from Anthropic, who accused DeepSeek, Moonshot, and MiniMax of distilling from Claude's outputs.

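[Unverified assertion] Anthropic's "accusation" is cited directly as fact, yet it is only one company's unilateral statement, and one with an obvious commercial motive (restricting competitors' use of its API). The article provides no independent verification and does not discuss the quality of the evidence behind the accusations. Treating an "accusation" made in a commercial-litigation context as established fact is a clear problem in terms of journalistic citation standards.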

-

So I don't see why I should expect compute-poor labs to find new software innovations much faster than compute-rich labs — on the contrary, I think the opposite is more likely.

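[Overgeneralization] The author lists the Transformer, scaling laws, and reasoning models as all originating from compute-rich players and concludes that "the compute-rich are better at innovating." But this is survivorship bias: we see only the innovations that were widely adopted, not those produced by compute-poor players that the mainstream never took up. More importantly, a very small sample (a handful of major breakthroughs) is used to support a strong conclusion about a systemic trend, so the statistical basis is extremely weak.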

-

If the last decade of AI has taught us one lesson, it's that scaling compute builds better models.

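[Logical flaw] The article opens by establishing a "compute determinism" framework, but this is a highly contestable premise. DeepSeek-R1 achieved competitive results with far less compute than its rivals, which shows precisely that algorithmic efficiency can partly substitute for compute. The author runs this counterexample through the whole piece, yet at the framework level quietly absorbs it as "a several-fold efficiency gain is not enough to close a tenfold gap." This circular reasoning makes the conclusion look more logically airtight than it actually is.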

-

-

-

We convert chip computing capabilities into H100 equivalents (H100e) based on their relative FLOP/s specifications, specifically their maximum 8-bit specification.

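Using "H100 equivalents" as a universal currency for compute is a methodological choice worth pondering in itself: it establishes the NVIDIA H100 as the base unit of compute, much as the US dollar serves as the global reserve currency. Yet Epoch AI itself admits this conversion is "most accurate for model training"; for inference workloads, the actual efficiency of TPUs may be systematically underestimated, meaning Google's real compute advantage could be larger than the numbers show.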

-

-

www.anthropic.com

-

An "American Exceptionalism" feature found in Meta's Llama-3.1-8B-Instruct. It controls the model's tendency to generate assertions of US superiority, a control absent in the Chinese model it was compared against.

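Surprisingly, Anthropic treats American models even-handedly too: it found an "American exceptionalism" feature in Meta's Llama. This shows that political bias is not unique to Chinese models but is a training artifact that any large model may embed. For a research team to disclose both findings symmetrically is politically very rare and takes considerable courage.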

-

- Jan 2026

-

www.whatsbetter.today

-

He is a lamp sitting in the dark, clutching an extension cord, waiting for someone else to find the outlet.

External Focus Bias. When you are in a "Voltage Dependency," your brain’s "Locus of Control" has shifted entirely outward. In a crisis, your Ventromedial Prefrontal Cortex (vmPFC) — which usually regulates self-relevance and confidence — goes quiet. You become biologically incapable of trusting your own judgment because your brain has "offloaded" its decision-making hardware to the mentor. The Transformer Protocol is a manual reboot to re-engage the internal circuitry of the vmPFC.

-

- Nov 2025

-

www.youtube.com

-

under the hood bias

for - definition - under the hood bias


-

-

www.mdpi.com

-

The study has chosen the top 100 companies by market capitalization from the manufacturing sector on the Shanghai Stock Exchange for a period of 10 years (2012–2021). The reason for choosing this unique blend of manufacturing companies is that, every year, the authorities of the Shanghai stock market issue a list of top companies in terms of higher market capitalization.

The sample is clearly defined as the top 100 manufacturing firms over a decade, which is substantial in size and length. However, because the sample is restricted to large manufacturing firms in one country and one stock exchange, the “population” of firms is quite narrow.

-

We further conducted panel data analysis to explore the association between CSR and Firm’s performance.

Though the study doesn’t highlight overt commercial funding, the institutional context (large listed firms in China) and the use of government subsidies as a moderator hint at potential institutional/incentive bias. The authors report that government subsidies (“Sub”) positively moderate the CSR-firm-performance link; this suggests firms receiving subsidies may have different motivations, which might bias the results toward favourable CSR outcomes.


-

- Sep 2025

-

angelbravo.cloud

-

A charity page shows $100, $50, and $25, with $100 listed first and $50 pre-selected. Because the first (highest) number sets an anchor, many people feel $50 is “reasonable” and stick with it, choosing a higher amount than if the list started at $25.

-

A car dealer first shows you a car priced at $40,000. Then they show you another one at $25,000. Because you anchored to the $40,000 price, the $25,000 car feels like a bargain even if it’s still overpriced.

-

A person believes that left-handed people are more creative. When they meet a creative left-handed person, they take it as proof. But when they meet a creative right-handed person, they dismiss it or forget it because it doesn’t fit their belief.

-

-

www.sciencedirect.com

-

Gignac, Gilles E. “The Number of Exceptional People: Fewer than 85 per 1 Million across Key Traits.” Personality and Individual Differences, vol. 234, Feb. 2025, p. 112955. ScienceDirect, https://doi.org/10.1016/j.paid.2024.112955.

-

- Jul 2025

-

jgmac1106.me

-

Cognitive Bias Reference List by [[Greg McVerry]]

-

-

crimereads.com

-

“Funny how we take it for granted that we know all there is to know about another person, just because we see them frequently or because of some strong emotional tie.” (Psycho)

-

- Jun 2025

-

annickdewitt.substack.com

-

The tendency to suppress or ignore the inconsistencies that challenge our worldviews is thus universal rather than partisan

for - adjacency - scientific paradigm shift - confirmation bias - universal behavior - liberal / conservative dynamics - progress

adjacency - scientific paradigm shift - confirmation bias - universal behavior - liberal / conservative dynamics - progress

- It is natural for humans to be both conservative and liberal
- If we weren't liberal, there would be no progress
- At the same time, we recognize the value of existing traditions - they worked and helped us to survive
- Confirmation bias is conservative - why tamper with something that isn't broken?
- Yet seeking novelty is a behavior that even the staunchest conservative displays
- It is nonsensical to think we have only one aspect and not the other; we have both

-

-

www.theguardian.com

-

neural networks of various kinds can generalise within a distribution of data they are exposed to, but their generalisations tend to break down beyond that distribution

True, as it has always been at a high level. But many neural networks do generalize well in ways that we find surprising and impressive. Usually, though, these cases turn out to still be within the distribution, thanks to the network's good inductive biases.

-

-

www.legalb.co.za

-

[Act 1996_108_178_20130201 amended by Act 2012_00A_010_20130201 wef 2013/08/13 ito GG20130822_36774]

Judicial Service Commission - composition - Constitution s178 requires 23 members split between components with separate powers: 10 from the legislature, 5 from the Executive, 3 from the Judiciary, and 5 from the Public. This split is a political one, as it seems to follow no sensible basis.

-

- Apr 2025

-

news.ycombinator.com

-

Around the start of ski season this year, we talked about my plans to go skiing that weekend, and later that day he started seeing skiing-related ads. He thinks it's because his phone listened into the conversation, but it could just as easily have been that it was spending more time near my phone

Or—get this—it was because despite the fact that he "hasn't been for several years", he used to "ski a lot", and it was the start of ski season.

You don't have to assume any sophisticated conspiracy of ad companies listening in through your device's microphone or location-awareness and user correlation. This is an outcome that could be effected by even the dumbest targeted advertising endeavor with shoddy not-even-up-to-date user data. (Indeed, those would be more likely to produce this outcome.)


-

- Mar 2025

-

www.imdb.com

-

As is fairly typical for documentary films on such emotive subjects, people who agree with the filmmaker's point of view rate it highly and rave about the film's objectivity while those who are predisposed against that point of view disparage it as industry propaganda and attack the credibility of the filmmakers.


-

- Feb 2025

-

moodle.cnc.bc.ca

-

Another factor was the Guinean reliance on spiritual healers, who use unscientific methods such as herbs, chants, and incantations

This is a biased statement, since the word "unscientific" implies that spiritual healers are incompetent at treating diseases. It is a disparaging term that casts their role in healing as ineffective.

To correct: We could state it as "One significant factor in the spread of disease was the dependence on spiritual practitioners, whose methods rely on medicinal plants and mantras, which resulted in opposition to modern medical procedures."

-

-

pmc.ncbi.nlm.nih.gov

-

First, although the National Institutes of Health (NIH) now emphasizes the need to include women and female animals in clinical research [e.g., 57], decades of research on the topics reviewed herein have been conducted using only men and male animals.

-

- Jan 2025

-

www.toastmasters.org

-

One reason that we might jump to a wrong understanding is that we approach the conversation with our own assumptions. And that can cause us to only hear or see one possible meaning, the one that we expect to hear. This is called bias confirmation.


-

- Nov 2024

-

theconversation.com

-

www.linkedin.com

-

Prof. Smith lives in London and has a brother in Berlin, Dr. Smith. To visit him, balancing time, cost, and carbon emissions is a tough call to make. But there is another problem. Dr. Smith has no brother in London. How can that be?

for - BEing journey - example - demonstrates system 1 vs system 2 thinking - example - unconscious bias - example - symbolic incompleteness

-

- Oct 2024

-

bugs.ruby-lang.org

-

The fact that many here are maintainers of Ruby implementations also has a biased effect on new features, as they might represent a burden on them. I'm not saying this is a bad thing, I love the diversity of points of view that this brings! OTOH, it's fair that people that do take time to discuss things here have a bigger influence on the direction that Ruby follows.

-

- Aug 2024

-

www.youtube.com

-

( ~5:00 ) Reading Aids should be used after initial interpretation. This is to prevent framing bias.

-

- Jul 2024

-

gemini.google.com

-

The song criticizes the tendency to rush to conclusions without fully grasping the complexities of social problems like poverty, inequality, and political corruption. Patience is essential here to delve deeper, research, and understand the root causes rather than relying on superficial opinions.

First, a man should not have any power over that which he does not understand (deeply).

Second, patience as a virtue is very important here, because developing expertise in an area takes time and effort. One must be devoted.

Following from this manner comes, once again, Charlie Munger's principle... Do not form an opinion if you do not understand multiple perspectives.

"Yes, but I don't have the time to do my own research." is a criticism of this principle; I respond with: "But if you aren't even willing to make time to form your opinion based on logic and deep understanding, is it worth having an opinion at all?"

Like Marcus Aurelius said: "The opinion of ten thousand men is of no value if none of them know anything about the subject."

You don't ask a lawyer to perform surgery on you, or even to explain it to you theoretically; he does not know anything about it. In the same way, a civilian should not be asked to teach politics.

From the same manner, do not judge before understanding. This is also what Mortimer J. Adler & Charles van Doren advocate: "You must say with reasonable certainty 'I understand' before you can say any of the following: 'I agree,' 'I disagree,' or 'I suspend judgement.'"

-

The song criticizes the tendency to rush into judgment without fully understanding the underlying problems. It also emphasizes the value of research and seeking out the truth from various perspectives.

This is basically critical thinking. Which is also my goal for (optimal) education: To build a society of people who think for themselves, critical thinkers; those who do not take everything for granted. The skeptics.

See also Nassim Nicholas Taleb's advice to focus on what you DON'T know rather than what you DO know.

Related to syntopical reading/learning as well (and Charlie Munger's advice). You want to build a complete picture with a broad and nuanced understanding before formulating an opinion.

Remove bias from your judgement (especially when it comes to people or civilizations) and instead base it on logic and deep understanding.

This also relates to (national, but even local) media... How do you know that what the media portrays about something or someone is correct? Don't take it for granted, especially if it is important, and do your own research. Validity of source is important; media is often opinionized and can contain a lot of misinformation.

See also Simone Weil's thoughts on media, especially where she says misinformation spread must be stopped. It is a vital need for the soul to be presented with (factual) truth.

Tags

- Dunning-Kruger Effect

- Damian Marley

- Marcus Aurelius

- Patience Song

- Bias

- Simone Weil

- Patience as Virtue

- Needs of the Soul

- Charles van Doren

- Truth

- Nassim Nicholas Taleb

- Meta-Analytic Research

- Education

- Nas Marley

- Criticizing Fairly

- Media

- Research

- Skepticism

- Self-Thinking Society

- Syntopical Reading

- Society

- Judgement

- Charlie Munger

- Mortimer J. Adler

- Critical Thinking


-

-

www.linkedin.com

-

Dr. Sönke Ahrens

On page 117 of "How to Take Smart Notes" you write the following: "The slip-box not only confronts us with dis-confirming information, but also helps with what is known as the feature-positive effect (Allison and Messick 1988; Newman, Wolff, and Hearst 1980; Sainsbury 1971). This is the phenomenon in which we tend to overstate the importance of information that is (mentally) easily available to us and tilts our thinking towards the most recently acquired facts, not necessarily the most relevant ones. Without external help, we would not only take exclusively into account what we know, but what is on top of our heads.[35] The slip-box constantly reminds us of information we have long forgotten and wouldn’t remember otherwise – so much so, we wouldn’t even look for it."

My question for you: Why have you chosen to use the Feature-Positive Effect as the phenomenon to make your point and not the recency bias?

The recency bias seems more aligned with your point of our minds favoring recently learned information/knowledge over already existing, perhaps more relevant, cognitive schemata.

To my mind, the FPE states that it is easier to detect patterns when the unique stimulus indicating the pattern is present rather than absent... In the following example:

Pattern in this sequence: 1235 8593 0591 2531 8532 (all numbers have a 5; the unique feature is present)

Pattern in this sequence: 1236 8193 0291 2931 8472 (no numbers contain a 5; the unique feature is absent)

The pattern in the first sequence is more easily spotted than the pattern in the second sequence; this is the feature-positive effect. This has not much to do with your point.

I do get where you are coming from, namely that we are biased towards what is more readily in mind; however, the extension of this argument with the comparison of relevance vs. time makes the recency bias or availability heuristic more applicable, and also easier to explain in my opinion.

Once again, I am simply curious what made you choose the FPE as the phenomenon to explain.

I hope you take the time to read this and respond to it. Thanks in advance.

Sources in the comments
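
The two digit sequences in the post above can be checked mechanically. The following is a small sketch, with a hypothetical helper name, that only verifies the stated patterns (a 5 in every group versus a 5 in no group); it says nothing about how easily a human spots either one.

```python
def shared_digit_pattern(sequence: str, digit: str) -> str:
    """Report whether `digit` appears in every group, in no group, or in some."""
    groups = sequence.split()
    if all(digit in group for group in groups):
        return "present in every group"
    if not any(digit in group for group in groups):
        return "absent from every group"
    return "mixed"


feature_present = "1235 8593 0591 2531 8532"
feature_absent = "1236 8193 0291 2931 8472"

print(shared_digit_pattern(feature_present, "5"))  # present in every group
print(shared_digit_pattern(feature_absent, "5"))   # absent from every group
```

Both patterns are equally trivial for the program to confirm, which underlines that the feature-positive effect concerns human perception of presence versus absence, not the logical difficulty of the rule.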

-

Hey Matthew, it's a fair point. Without having the whole passage or a previous draft in front of me, it could be simply the outcome of the editing process. It does read like you said: as if I had recency bias in mind (next to other fitting ones), which then got lost after having shortened it for readability. That's my best guess. Even though it is tempting in these cases to come up with some post-hoc, smart sounding reason...

Response by Ahrens to my question/criticism

-

- May 2024

-

docdrop.org

-

human Minds have to find a way to cope so that you can get that six to eight hours of sleep at night or whatever and there's a lot of evil things that people will accept just so they can get through the day

for - key insight, adjacency - conformity bias - accepting evil - Russian citizen complacency - no protest

adjacency, key insight - between - geopolitics - Russia Ukraine War - complacency - oppression - adjacency relationship - Just like how ordinary German citizens accepted the death of millions of Jews, the same thing is happening in Russia. - Millions of ordinary Russian citizens are just doing what they can individually to survive day to day - If that means accepting the death of hundreds of thousands of innocents, then that is the price they will pay - Putin's oppression is so brutal that individuals risk their lives if they put up any resistance or protest

-

- Apr 2024

-

Local file

-

emphasises the necessity of taking note of any serious assertion even if contrary to our own ideas.

Taking note of any serious ideas which are contrary to our own conceptions is important because this may lead us to new realizations about concretes which in turn leads to new frontiers of knowledge.

One must find a way to push through potential cognitive dissonance to compare ideas and sharpen them over time.

-

- Mar 2024

-

Local file

-

Look how he laughs already!

Confirmation bias yet again: Iago has done nothing but plant the seeds (or rather, water the seeds of insecurity) that Othello already has in him; the rest is the human need for drama, for coherency, for understanding.

-

-

Local file

-

but I can guide you in how to find the poem "Barndommens Gade" by Tove Ditlevsen.

- Public libraries: You can visit your local library and borrow a poetry collection or an anthology that contains the poem "Barndommens Gade". The library may also have digital resources where you can read the poem online.

- Online literature databases: There are several online literature databases where you can find poems by Tove Ditlevsen, including "Barndommens Gade". Some popular literature databases include Det Kgl. Bibliotek's "Bibliotek.dk", Litteratursiden.dk, or Den Store Danske Encyklopædi.

- Booksellers: You can visit a bookshop and find poetry collections by Tove Ditlevsen that contain the poem "Barndommens Gade". If you cannot find a physical copy, you may be able to buy or download a digital edition.

- Public-domain sites: Since Tove Ditlevsen is a classic author, the poem may be available on public-domain sites, where literature from earlier centuries is freely available. Be mindful of copyright, however, especially if the poem is still protected by copyright.

Once you have found the poem, you can read it and analyze it more closely in line with the aspects discussed earlier.

-

Unfortunately I cannot give direct quotations from protected material such as poems, but I can guide you in how to find the poem "Barndommens Gade" by Tove Ditlevsen

-

I'm sorry, but as an AI model I do not have access to specific images or the ability to search the internet in real time

-

To find pictures of Tove Ditlevsen, you can search the internet using a search engine such as Google and entering her name

-

However, I can give you a brief introduction to Tove Ditlevsen based on my existing data:

-

-

Local file

-

I cannot provide an excerpt from chapters 1-3 of "Barndommens gade", as it is a protected work by Tove Ditlevsen.

-

I can, however, summarize the plot or discuss themes, characters, and other relevant elements of the book, if desired

-

If it is from a book, article, or other work, you would normally be able to find the page number by searching in the work in question. If it is from an online source or another form of media, there may not be a page number

-

Unfortunately I cannot give you a page number for a specific quotation, as that depends on the source in which the quotation was originally published. If it is from a book, article, or other work, you would normally be able to find the page number by searching in the work in question. If it is from an online source or another form of media, there may not be a page number

-

-

Local file

-

“Story Is King” differentiated us, we thought, not just because we said it but also because we believed it and acted accordingly. As I talked to more people in the industry and learned more about other studios, however, I found that everyone repeated some version of this mantra: it didn’t matter whether they were making a genuine work of art or complete dreck, they all said that story is the most important thing. This was a reminder of something that sounds obvious but isn’t: Merely repeating ideas means nothing. You must act, and think, accordingly. Parroting the phrase “Story Is King” at Pixar didn’t help the inexperienced directors on Toy Story 2 one bit. What I’m saying is that this guiding principle, while simply stated and easily repeated, didn’t protect us from things going wrong. In fact, it gave us false assurance that things would be okay.

Having a good catch phrase for guidance can become a useless trap if it becomes repeated so frequently that it loses meaning. Guiding principles need to be revisited, actively worked on, and ensconced into daily activities and culture.

Examples:

- Google and "don't be evil"
- Pixar (and many others) and "story is king" (cross-reference Ed Catmull in Creativity, Inc.)
- Pixar and "trust the process" (ibid.)

-

-

Local file

-

Unfortunately I cannot provide more of the poem, as it is copyright-protected material. I will, however, gladly help with an analysis of the poem or answer any questions you may have about themes, stylistic elements, or other aspects of the work

-

I must apologize, but I cannot give you an exact reproduction of Klaus Rifbjerg's poem "Snefald over Nørrebro", as it is a protected work and copyrighted material. The excerpt I gave was not an exact reproduction of the poem, but more a general illustration of Rifbjerg's use of imagery and mood. I can, however, give you guidance on how to find the correct quotation and the right wording. You can find the poem in Rifbjerg's poetry collections or in anthologies containing his works. I recommend searching for it in libraries, online anthologies, or books on Danish literature. When you find the correct quotation, it will be inflected correctly, as written by the author

-

Is that an exact reproduction of the poem? Is the adjective in the second verse, for example, inflected like that?

-

-

victorianweb.org

-

“Do you know, Watson,” said he, “that it is one of the curses of a mind with a turn like mine that I must look at everything with reference to my own special subject. You look at these scattered houses, and you are impressed by their beauty. I look at them, and the only thought which comes to me is a feeling of their isolation and of the impunity with which crime may be committed there.”

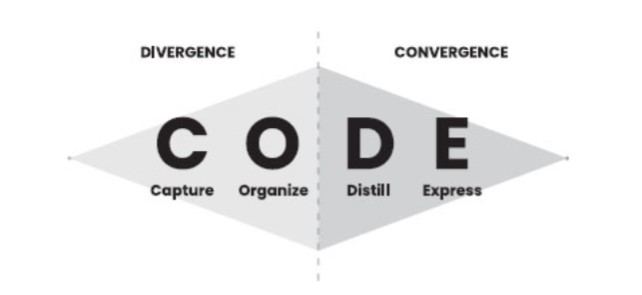

Indexing the world into a commonplace book, zettelkasten, or other means can create new perspectives on the world in which we live. It thereby helps to prevent the sorts of cognitive bias to which we might otherwise fall prey.

This example of Homes indexing crime gives him a dramatically different perspective on crime in the countryside to Watson who only sees the beauty in the story of "The Adventure of the Copper Beeches."

-

-

-

Furthermore, there is compelling evidence that obtaining consent can result in bias, which, in certain circumstances, can affect the outcome of the analysis. Introducing bias into data would not be in the interest of any of the stakeholders.

-

- Feb 2024

-

www.francetvinfo.fr www.francetvinfo.fr

-

-

rwu.brightspace.com rwu.brightspace.com

-

An understanding of unconscious bias is an invitation to a new level of engagement about diversity issues. It requires awareness, introspection, authenticity, humility, and compassion. And most of all, it requires communication and a willingness to act.

-

Micro-affirmations – apparently small acts, which are often ephemeral and hard-to-see, events that are public and private, often unconscious but very effective, which occur wherever people wish to help others to succeed.

-

- We make assumptions and determinations about what is real every moment of every day. Our perception, in other words, is so deeply buried in our “underlying machinery,” our unconscious, that even knowing that it is there makes it difficult, or impossible to see its impact on our thinking and on what we see as real.

- Unconscious perceptions govern many of the most important decisions we make and have a profound effect on the lives of many people in many ways.

-

Human beings, at some level, need bias to survive. So, are we biased? Of course. Virtually every one of us is biased toward something, somebody, or some group.

-

-

www.youtube.com www.youtube.com

-

12.54 2. Anchoring bias. Default, norms, dictate behaviour. So, clear clutter. Be disciplined.

-

- Jan 2024

-

www.eff.org www.eff.org

-

Images of women are more likely to be coded as sexual in nature than images of men in similar states of dress and activity, because of widespread cultural objectification of women in both images and its accompanying text. An AI art generator can “learn” to embody injustice and the biases of the era and culture of the training data on which it is trained.

Objectification of women as an example of AI bias

-

-

-

(my bias is showing through - marketing people don't call it surveillance capitalism, to be fair. That's a pejorative term. They just call it doing their job, generating leads, and increasing conversions.)

-

-

www.technologyreview.com www.technologyreview.com

-

- for: progress trap -AI, carbon footprint - AI, progress trap - AI - bias, progress trap - AI - situatedness

-

-

docdrop.org docdrop.org

-

Which is exactly what you do in the book. And what did you find? - So what I do, I take apart the operating system of capitalism, which is, and I look at seven myths, really that drive it.

-

for: book - wealth supremacy - 7 myths, 7 myths of Capitalism, capital bias, definition - capital bias

-

DESCRIPTION: 7 MYTHS of CAPITALISM

- The Myth of Maximization

- example of absurdity of maximization

- Bill Gates had $10 billion. Then he invested it and got $300 billion. There's no limit to how much wealth an individual can accumulate. It is absurd.

- Myth of the Income Statement

- Gains to capital are called profit and are always to be increased, and

- Gains to labor are called an expense and are always to be decreased

- Myth of Materiality (also called capital bias)

- definition: capital bias

- If something impacts capital, it matters

- If something impacts society or ecology, it doesn't matter

- With the capital bias, only accumulating more capital matters. NOTHING ELSE MATTERS. This is how most accountants and CFOs view the world.

-

quote: Laura Flanders

- The capital is what matters. We're aiming for more capital and nothing else really matters. That's the operating system of the economy. So the real world is immaterial to this world of wealth as held in stocks and shares and financial instruments.

-

-

- Dec 2023

-

www.youtube.com www.youtube.com

-

https://www.youtube.com/watch?v=7xRXYJ355Tg The AI Bias Before Christmas by Casey Fiesler

-

-

-

have you seen this amazing interview from years ago with um what's he called Andrew 00:50:57 marsky yes and um uh and he says and um Andrew Maron says in a incredibly pompous way you know journalist with a stroppy disputatious

-

for: media bias - insight of journalist questions

-

media insight

- the journalist's question reveals where they are situated

-

-

you can see it all the time it's 00:41:37 unbelievably it's unbelievably painful we look at all the our institutions

-

for: polycrisis - entrenched institutional bias, examples - entrenched institutional bias - bank macro economic policy - lobbyist

-

paraphrase

- James provides two examples of major institutional bias that has to be rapidly overcome if we are to stand a chance at facilitating rapid system change:

- Bank of England controls macroeconomic policies that favour elites and not ordinary people and

- these policies are beyond political contestation

- In the normal political system, lobbyists through the revolving door between the top levels of the Civil Service and the corporate sector bias policies for elites and not ordinary citizens

-

-

-

www.frontiersin.org www.frontiersin.org

-

Conclusion: Supporting our hypotheses, we identify a general trend that social marginalization is associated with less system-justification. Those benefitting from the status quo (e.g., healthier, wealthier, less lonely) were more likely to hold system-justifying beliefs. However, some groups who are disadvantaged within the existing system reported higher system-justification—suggesting that system oppression may be a key moderator of the effect of social position on system justification.

-

for: system justification theory, status quo bias, question - lack of commensurate action

-

summary

- Supporting their hypotheses, the authors identify a general trend that social marginalization is associated with less system-justification.

- Those benefitting from the status quo (e.g., healthier, wealthier, less lonely) were more likely to hold system-justifying beliefs.

- However, some groups who are disadvantaged within the existing system reported higher system-justification—suggesting that

- system oppression may be a key moderator of the effect of social position on system justification.

-

Question

- The question here is this:

- Can system justification theory explain why the majority of citizens, even though they are aware that the current fossil fuel energy system must be rapidly scaled down, show no commensurate sense of emergency or concomitant action?

-

-

the oppression of gender minority and non-white individuals very likely increases the costs of desisting from system-justifying beliefs as is the case when minority political candidates are judged as more extreme compared to white and male candidates (69)—increasing the social sanctions (costs) for holding “extreme” views. These pressures can give rise to politics of respectability—which are used to deflect social pressures targeting one's identity (70, 71).

-

for: system justification theory - conformity bias

-

key insight

- conformity bias imposed on individuals belonging to minorities can bring about stronger system justification behavior

-

-

-

for: system justification theory, status quo bias

-

summary

- Supporting their hypotheses, the authors identify a general trend that social marginalization is associated with less system-justification.

- Those benefitting from the status quo (e.g., healthier, wealthier, less lonely) were more likely to hold system-justifying beliefs.

- However, some groups who are disadvantaged within the existing system reported higher system-justification—suggesting that

- system oppression may be a key moderator of the effect of social position on system justification.

- This is a very important finding and could be used to develop more effective social tipping point strategies

-

-

- Nov 2023

-

en.wikipedia.org en.wikipedia.org

-

https://en.wikipedia.org/wiki/Presentism_(historical_analysis)

relationship with context collapse

Presentism bias enters biblical and religious studies when, by way of context collapse, readers apply texts written thousands of years ago for one context to their own current context without any appreciation for the intervening changes. Many modern Christians (especially Protestants) show these patterns. There is an interesting irony here, because Protestantism began as a response to the Catholic Church reading too much into the Bible to create practices like indulgences.

-

- Oct 2023

-

-

Three AI Chatbots, Two Books, and One Weird Annotation Experiment by Remi Kalir on September 29, 2023 https://remikalir.com/blog/three-ai-chatbots-two-books-and-one-weird-annotation-experiment/

-

-

theconversation.com theconversation.com

-

Obviously, recently, it no longer had any sources within Hamas. Its blindness is no less astonishing. For example, journalists had reported in recent months that many Hamas militants regularly went out to train on motorbikes, and even learned to fly light aircraft; and yet the Israeli services saw nothing of it. This is a major flaw for which they will have to answer one day.

- for: confirmation bias, confirmation bias - hamas attack on Israel

-

-

peacemakers.beehiiv.com peacemakers.beehiiv.com

-

Are both governments more incentivized to the status quo than a true peace? Yes. Because mortal enemies help us justify the things that we already want to do.

- for: confirmation bias, example - confirmation bias

-

-

www.poetryfoundation.org www.poetryfoundation.org

-

My earliest teachers were those who walked and continue to walk beside me, who learn alongside me. In this way, my poetic lineage is situated not in the before, in the sense of being in the past. Instead, the poets I come from, are before me in the sense of being right in front of me, returning my gaze, answering my questions and asking their own.

This is community. This is what academic and creative life can be. How can groups hold themselves accountable to openness, transparency, and hospitality?

-

-

medium.com medium.com

-

garnering space at grocery chains, even at the more principled ones such as Whole Foods, often requires 6-figure slotting fees and the ability to produce at massive scale.

- for: big ag, big food, grocery store bias - big ag, big ag - slotting fees

-

-

tinlizzie.org tinlizzie.org

-

so we take two uh things that whose size we know could be our thumbs it could be oranges could be poker chips and look at them have one twice as far away as the other first thing to think about is you know as far as our brain and our

The poker chip example is explained really well in the Reality Distortion Kit at the Stanford lecture.

-

- Sep 2023

-

delong.typepad.com delong.typepad.com

-

But the last question, What of it?, requires considerable restraint on the part of the reader. It is here that the situation we described earlier may occur-namely, the situation in which the reader says, "I cannot fault the author's conclusions, but I nevertheless disagree with them." This comes about, of course, because of the prejudgments that the reader is likely to have concerning the author's approach and his conclusions.

How to protect against these sorts of outcomes? Relation to identity and cognitive biases?

-

e hard scientist does is to say that he "stipulates his usage"-that is, he informs you what terms are essential to his argument and how he is going to use them. Such stipulations usually occur at the beginning of the book, in the form of definitions, postulates, axioms, and so forth. Since stipulation of usage is characteristic of these fields, it has been said that they are like games or have a "game structure."

Depending on the level at which a writer stipulates their usage, they may come to some drastically bad conclusions. One should watch out for these sorts of biases.

Compare with the results of accepting certain axioms within mathematics and how that changes/shifts one's framework of truth.

-

- Aug 2023

-

en.wikipedia.org en.wikipedia.org

-

In finance, the greater fool theory suggests that one can sometimes make money through the purchase of overvalued assets — items with a purchase price drastically exceeding the intrinsic value — if those assets can later be resold at an even higher price.

-

-

www.scientificamerican.com www.scientificamerican.com

-

The participants in both the 2018 and the retracted 2023 studies were recruited from online communities that were explicitly critical about many aspects of gender-affirming care for transgender kids.

-

-

www.pewresearch.org www.pewresearch.org

-

The big tech companies, left to their own devices (so to speak), have already had a net negative effect on societies worldwide. At the moment, the three big threats these companies pose – aggressive surveillance, arbitrary suppression of content (the censorship problem), and the subtle manipulation of thoughts, behaviors, votes, purchases, attitudes and beliefs – are unchecked worldwide

- for: quote, quote - Robert Epstein, quote - search engine bias, quote - future of democracy, quote - tilting elections, quote - progress trap, progress trap, cultural evolution, technology - futures, futures - technology, indyweb - support, future - education

- quote

- The big tech companies, left to their own devices, have already had a net negative effect on societies worldwide.

- At the moment, the three big threats these companies pose

- aggressive surveillance,

- arbitrary suppression of content,

- the censorship problem, and

- the subtle manipulation of

- thoughts,

- behaviors,

- votes,

- purchases,

- attitudes and

- beliefs

- are unchecked worldwide

- author: Robert Epstein

- senior research psychologist at American Institute for Behavioral Research and Technology

- paraphrase

- Epstein's organization is building two technologies that assist in combating these problems:

- passively monitor what big tech companies are showing people online,

- smart algorithms that will ultimately be able to identify online manipulations in realtime:

- biased search results,

- biased search suggestions,

- biased newsfeeds,

- platform-generated targeted messages,

- platform-engineered virality,

- shadow-banning,

- email suppression, etc.

- Tech evolves too quickly to be managed by laws and regulations,

- but monitoring systems are tech, and they can and will be used to curtail the destructive and dangerous powers of companies like Google and Facebook on an ongoing basis.

- reference

- seminar paper on monitoring systems, ‘Taming Big Tech’: https://is.gd/K4caTW.

Tags

- quote - tilting elections

- quote - progress trap

- search engine bias

- quote SEME

- progress trap - social media

- quote - mind control

- quote - Robert Epstein

- progress trap

- progress trap - search engine

- quote

- search engine manipulation effect

- progress trap - Google

- quote -search engine manipulation effect

- SEME

- progress trap - digital technology

- quote - election bias

-

-

hackernoon.com hackernoon.com

-

- for: tilting elections, voting - social media, voting - search engine bias, SEME, search engine manipulation effect, Robert Epstein

- summary

- research that shows how search engines can actually bias towards a political candidate in an election and tilt the election in favor of a particular party.

-

In our early experiments, reported by The Washington Post in March 2013, we discovered that Google’s search engine had the power to shift the percentage of undecided voters supporting a political candidate by a substantial margin without anyone knowing.

- for: search engine manipulation effect, SEME, voting, voting - bias, voting - manipulation, voting - search engine bias, democracy - search engine bias, quote, quote - Robert Epstein, quote - search engine bias, stats, stats - tilting elections

- paraphrase

- quote

- In our early experiments, reported by The Washington Post in March 2013,

- we discovered that Google’s search engine had the power to shift the percentage of undecided voters supporting a political candidate by a substantial margin without anyone knowing.

- 2015 PNAS research on SEME

- http://www.pnas.org/content/112/33/E4512.full.pdf?with-ds=yes&ref=hackernoon.com

- stats begin

- search results favoring one candidate

- could easily shift the opinions and voting preferences of real voters in real elections by up to 80 percent in some demographic groups

- with virtually no one knowing they had been manipulated.

- stats end

- Worse still, the few people who had noticed that we were showing them biased search results

- generally shifted even farther in the direction of the bias,

- so being able to spot favoritism in search results is no protection against it.

- stats begin

- Google’s search engine

- with or without any deliberate planning by Google employees

- was currently determining the outcomes of upwards of 25 percent of the world’s national elections.

- This is because Google’s search engine lacks an equal-time rule,

- so it virtually always favors one candidate over another, and that in turn shifts the preferences of undecided voters.

- Because many elections are very close, shifting the preferences of undecided voters can easily tip the outcome.

- stats end

-

Early in 2013, Ronald Robertson, now a doctoral candidate at the Network Science Institute at Northeastern University in Boston, and I discovered that Google isn’t just spying on us; it also has the power to exert an enormous impact on our opinions, purchases and votes.

- for: big tech - bias, big tech - manipulation, big tech - mind control, big tech - influence

- paraphrase

- Early in 2013, Ronald Robertson,

- now a doctoral candidate at the Network Science Institute at Northeastern University in Boston,

- and I discovered that Google isn’t just spying on us;

- it also has the power to exert an enormous impact on our opinions, purchases and votes.

-

he Search Suggestion Effect (SSE), the Answer Bot Effect (ABE), the Targeted Messaging Effect (TME), and the Opinion Matching Effect (OME), among others. Effects like these might now be impacting the opinions, beliefs, attitudes, decisions, purchases and voting preferences of more than two billion people every day.

- for: search engine bias, google privacy, orwellian, privacy protection, mind control, google bias

- title: Taming Big Tech: The Case for Monitoring

- date: May 14th 2018

-

author: Robert Epstein

-

quote

- paraphrase:

- types of search engine bias

- the Search Suggestion Effect (SSE),

- the Answer Bot Effect (ABE),

- the Targeted Messaging Effect (TME), and

- the Opinion Matching Effect (OME), among others.

- Effects like these might now be impacting the

- opinions,

- beliefs,

- attitudes,

- decisions,

- purchases and

- voting preferences

- of more than two billion people every day.

Tags

- big tech - manipulation

- orwellian

- stats - tilting elections

- search engine bias

- PNAS SEME study

- voting - social media

- Robert Epstein

- voting - search engine bias

- voting

- big tech - influence

- Google bias

- quote - search engine bias

- democracy - search engine bias

- quote - Robert Epstein

- stats

- quote

- search engine manipulation effect

- big tech - mind control

- quote - monitoring big tech

- elections - interference

- democracy - social media

- elections - bias

- Washington Post story - search engine bias

- mind control

- SEME

- big tech - bias

-

-

www.youtube.com www.youtube.com

-

Everything I'm saying to you right now is literally meaningless. (Laughter) 00:03:11 You're creating the meaning and projecting it onto me. And what's true for objects is true for other people. While you can measure their "what" and their "when," you can never measure their "why." So we color other people. We project a meaning onto them based on our biases and our experience.

- for: projection, biases, bias, perspectival knowing, indyweb, tacit to explicit, explication, misunderstanding

- comment

- The "why" is invisible.

- It is the thoughts in the private worlds of the other.

- It is only our explication through language or other means that makes public our private world

- We construct meaning in the world.

- Our meaningverse is our construction. BUT it is a cultural construction,

- it was constructed by all the meaning learned from others, especially beginning with the most significant other, our mother.

-

- Jul 2023

-

docdrop.org docdrop.org

-

we have all sorts of stupid biases when it comes to leadership selection.

- facial bias

- experiments show that children and adults alike, shown faces they did not know, chose the actual winners and runners-up of elections to be their leaders

- China exploits the "white-guy-in-a-tie" problem to win deals.

- Companies hire a white person with zero experience to wear a nice suit and tie and pose as a businessman who has just flown in from Silicon Valley.

-

Why are we drawn to people who are clearly not 00:20:59 in the business of public service but want to abuse us and often show us that they are strong men who are oriented towards conquering and dominating rather than serving us? And that puts the mirror back on us. And the answer, I think, is partly to do with evolutionary psychology.

- key observation

- we often vote for "strong men" who are not in the business of public service but are oriented towards conquering and dominating due to a cognitive bias developed from tens of thousands of years of evolution.

- in ancient times, a physically strong man to lead us often increased our chances of survival.

- This is no longer true today, but that cognitive bias is still with us because evolution takes a long time.

- Hence, this cognitive bias to select strong men is maladaptive today.

-

when we think about self-selection bias and survivorship bias in tandem, we have a really important understanding of how power actually operates

- key observation

- the dynamics and relationship between

- self-selection bias and

- survivorship bias

- give us insight into how power operates

- The wrong kinds of people, those who are power-hungry, seek power more in the first place.

- Then they're better at obtaining it.

- They show up in our ordinary lives because they've survived,

- they've made it.

- So when we think about who is powerful,

- we have to think about

- the people who didn't seek power in the first place and

- the people who didn't obtain power in the first place.

- the people who didn't survive in power for very long, and therefore they dropped out.

- The presidents and prime ministers,

- the generals,

- the cult leaders,

- the business leaders,

- those people are basically people who have survived and who self-selected.

-

Abraham Wald

- example

- survivorship bias

- Abraham Wald was a statistician who was tasked by the Allied war effort with understanding how to make the Allied war planes function better.

- And he was presented with a series of airplanes that had bullet holes throughout them after they had returned from bombing runs over Nazi Germany.

- And he looked at them, and he saw that there were

- holes in the wings,

- holes in the tail,

- holes in the nose of the plane.

- And the general said to him, you know, "Based on your statistical expertise, where should we put extra armor?

- Where should we reinforce the plane?"

- And most of the people thought they should put them where the bullet holes were.

- Abraham Wald took one look at this, and he said, "If you put armor over the places where the holes are,

- you're going to make the planes get shot down more."

- Because the reality was the places that didn't have bullet holes were the most crucial.

- The places that had been shot in

- the fuselage,

- the middle of the plane where the engine was,

- those were in Germany, they didn't survive,

- they were wrecks.

- So they never made it back to be analyzed.

- So survivorship bias is a bias where we look at the wrong kinds of data because we only look at what survived.

-

What is survivorship bias?

- There's two forms of bias around power that are really important to understand.

- The first is self-selection bias, but then there's another bias called

- survivorship bias.

- And this is where we only see people who make it into power.

- When you think about the people you know who are powerful, those are people who have survived,

- they've sought power, and

- they've obtained it, and

- they've maintained it.

- The people who didn't seek power in the first place,

- weren't successful in achieving it, or

- only lasted for a short time,

- those don't show up when we think about powerful people.

-

The same is true for power. People who are power-hungry, people who are psychopaths tend to self-select into positions of power more than the rest of us. And as a result, we have this skew, this bias in positions of power where certain types of people, often the wrong kinds of people, 00:14:51 are more likely to put themselves forward to rule over the rest of us

- key observation

- People who are power-hungry, people who are psychopaths

- tend to self-select into positions of power more than the rest of us.

- And as a result, we have this skew, this bias in positions of power

- where certain types of people, often the wrong kinds of people,

- are more likely to put themselves forward to rule over the rest of us

Tags

- maladaptive bias

- facial bias

- leadership selection biases

- white-guy-in-a-tie bias

- survivorship bias

- analysis of WWII planes with bullet holes

- self-selection bias

- self-selection effect

- strong man maladaptive bias

- self-selection and survivorship bias

- Abraham Wald

- strong man

- cognitive bias

-

-

www.youtube.com www.youtube.com YouTube

-

https://www.youtube.com/watch?v=b1_RKu-ESCY

Lots of controversy over this music video this past week or so.

In addition to some of the double entendre meanings of "we take care of our own", I'm most appalled about the tacit support of the mythology that small towns are "good" and large cities are "bad" (or otherwise scary, crime-ridden, or dangerous).

What do per capita crime statistics say about the safety of small towns versus large cities?

Violence and crime in big cities are oversampled by most media (newspapers, radio, and television), which feeds an availability bias. This video plays heavily into this bias.

There's also an opposing availability bias going on with respect to the positive aspects of small communities "taking care of their own," when in general, from an institutional perspective, small towns are patently not taking care of each other, or when they do, it's very selective and/or in-crowd based rather than across the board.

Note also that all the news clips and chyrons are from Fox News in this piece.

Alternatively, where are the musicians singing about and focusing on the positive aspects of cities and their cultures?

-

-

arxiv.org arxiv.org

-

In traditional artforms characterized by direct manipulation [32] of a material (e.g., painting, tattoo, or sculpture), the creator has a direct hand in creating the final output, and therefore it is relatively straightforward to identify the creator’s intentions and style in the output. Indeed, previous research has shown the relative importance of “intention guessing” in the artistic viewing experience [33, 34], as well as the increased creative value afforded to an artwork if elements of the human process (e.g., brushstrokes) are visible [35]. However, generative techniques have strong aesthetics themselves [36]; for instance, it has become apparent that certain generative tools are built to be as “realistic” as possible, resulting in a hyperrealistic aesthetic style. As these aesthetics propagate through visual culture, it can be difficult for a casual viewer to identify the creator’s intention and individuality within the outputs. Indeed, some creators have spoken about the challenges of getting generative AI models to produce images in new, different, or unique aesthetic styles [36, 37].

Traditional artforms (direct manipulation) versus AI (tools have a built-in aesthetic)

Some authors speak of having to wrest control of the AI output from its trained style, making it challenging to create unique aesthetic styles. The artist indirectly influences the output by selecting training data and manipulating prompts.

As use of the technology becomes more diverse—as consumer photography did over the last century, the authors point out—how will biases and decisions by the owners of the AI tools influence what creators are able to make?

To a limited extent, this is already happening in photography. Smartphones run algorithms on image sensor data to construct the picture. This is a source of controversy; see "Why Dark and Light is Complicated in Photographs" on Aaron Hertzmann’s blog and "Putting Google Pixel's Real Tone to the test against other phone cameras" in The Washington Post.

-

-

www.forbes.com www.forbes.com

-

One federal judge in the Northern District of Texas issued a standing order in late May after Schwartz’s situation was in headlines that anyone appearing before the court must either attest that “no portion of any filing will be drafted by generative artificial intelligence” or flag any language that was drafted by AI to be checked for accuracy. He wrote that while these “platforms are incredibly powerful and have many uses in the law,” briefings are not one of them as the platforms are “prone to hallucinations and bias” in their current states.

Seems like this judge has a strong bias against the use of AI. I think this ban is too broad and unfair. Maybe they should ban spell check and every other tool that could make mistakes too? Ultimately, the humans using the tool should be the ones responsible for checking the generated draft for accuracy and the ones held responsible for any mistakes; they shouldn't simply be forbidden from using the tool.

-

-

get.checkology.org get.checkology.org

-

Found this while looking for gamified ways to teach people how to spot/identify bias.

Seems to be primarily targeted toward educators. Checkology does routine maintenance during July to delete all student accounts, and I was unable to create an account to see what this is like.

-

-

www.getbadnews.com www.getbadnews.com

-

A website I found while trying to look for gamified ways for people to learn how to spot/identify bias.

-

-

www.nytimes.com www.nytimes.com

-

Uber promising implausibly cheap rides, courtesy of a future with self-driving cars

- Case study of market bias

- Uber self-driving cars

-

- Jun 2023

-

www.youtube.com www.youtube.com

-

Think of branches not as collections, but rather as conversations

When a branch starts to build, or prove itself, then ask the question (before indexing): "What is the conversation that is building here?"

Also related to Sönke Ahrens' maxim of seeking Disconfirming Information to counter Confirmation Bias. By thinking of branches as conversations instead of collectives, you are also more inclined to put disconfirming information within the branch.

-

-

danallosso.substack.com danallosso.substack.com

-

Books and Confirmation Bias by Dan Allosso

-

-

www.semanticscholar.org www.semanticscholar.org

-

Growing literature has shown that powerful NLP systems may encode social biases; however, the political bias of summarization models remains relatively unknown.

NLP systems, like language (use) itself, encode and hold bias.

Summarization, apparently, is not bias-free either.

Goals: Our systematic characterization provides a framework for future studies of bias in summarization.

-

- May 2023

-

ourworldindata.org ourworldindata.org Books

-

A book is defined as a published title with more than 49 pages.

[24] AI - Bias in Training Materials

-

-

www.technologyreview.com www.technologyreview.com

-

An AI model taught to view racist language as normal is obviously bad. The researchers, though, point out a couple of more subtle problems. One is that shifts in language play an important role in social change; the MeToo and Black Lives Matter movements, for example, have tried to establish a new anti-sexist and anti-racist vocabulary. An AI model trained on vast swaths of the internet won’t be attuned to the nuances of this vocabulary and won’t produce or interpret language in line with these new cultural norms. It will also fail to capture the language and the norms of countries and peoples that have less access to the internet and thus a smaller linguistic footprint online. The result is that AI-generated language will be homogenized, reflecting the practices of the richest countries and communities.

[21] AI Nuances

-

-

maggieappleton.com maggieappleton.com

-

This clearly does not represent all human cultures and languages and ways of being. We are taking an already dominant way of seeing the world and generating even more content reinforcing that dominance

Amplifying dominant perspectives, a feedback loop that ignores all of humanity falling outside the original training set, which is impoverishing itself, while likely also extending the societal inequality that the data represents. Given how such early weaving errors determine the future (see fridges), I don't expect that to change even with more data in the future. The first discrepancy will not be overcome.

-

This means they primarily represent the generalised views of a majority English-speaking, western population who have written a lot on Reddit and lived between about 1900 and 2023. Which in the grand scheme of history and geography, is an incredibly narrow slice of humanity.

Appleton points to the inherently, severely limited training set, and hence perspective, that is embedded in LLMs. Most of current human society, and of history and the future, is excluded. This goes back to my take on data and blind faith in using it: [[Data geeft klein deel werkelijkheid slecht weer 20201219122618]] and [[Check data against reality 20201219145507]]

-

- Apr 2023

- Mar 2023

-

www.frontiersin.org www.frontiersin.org

-

This example illustrates the potential for an unintended consequence to move between categories and demonstrates that there are times when it is necessary to review and reflect. What is considered known and knowable changes over time: has the state of knowledge developed or an unintended consequence been identified?

- This is the critical question

- Looking at history, can we see predictive patterns

- when it makes sense to stop and take questions of the unknown seriously

- rather than steaming ahead into uncharted territory?

- We might find that society did not follow science's call

- for applying the precautionary principle

- because profits were just too great

- the profit bias at play

- profit overrides safety, health and wellbeing

-

-

www.nytimes.com www.nytimes.com

-

Whose values do we put through the A.G.I.? Who decides what it will do and not do? These will be some of the highest-stakes decisions that we’ve had to make collectively as a society.’’

A similar set of questions might be asked of our political system. At present, the oligopolistic nature of our electoral system heavily biases our direction as a country.

We're heavily underrepresented on a huge number of axes.

How would we change our voting and representation systems to better represent us?

-

-

idlewords.com idlewords.com

-

we have turned to machine learning, an ingenious way of disclaiming responsibility for anything. Machine learning is like money laundering for bias. It's a clean, mathematical apparatus that gives the status quo the aura of logical inevitability. The numbers don't lie.

Machine learning like money laundering for bias

-

-

nymag.com nymag.com

-

Tech-makers assuming their reality accurately represents the world create many different kinds of problems. The training data for ChatGPT is believed to include most or all of Wikipedia, pages linked from Reddit, a billion words grabbed off the internet

LLMs as a model of reality, but not reality

There are limits to any model. In this case, the training data. What biases are implicitly in that model based on how it was selected and what it contained?

The paragraph goes on to list some biases: race, wealth, and “vast swamps”.

-

- Feb 2023

-

www.euronews.com www.euronews.com

-

- There is a spectrum of climate denialism.

- This article focuses on a group called "dismissives", who are afraid of the change that climate change will bring.

- In essence, their climate denialism is a hidden form of eco anxiety

- They can be reacting fearfully

- It also explores the new strategy of climate delay

- One subject not explored here is cognitive biases

- https://jonudell.info/h/facet/?user=stopresetgo&max=300&expanded=true&any=cognitive+bias&exactTagSearch=true

-

-

theconversation.com theconversation.com

-

belief perseverance

- belief perseverance

- definition

- a cognitive bias in which people encountering evidence that runs counter to their beliefs will, instead of reevaluating what they’ve believed up until now, tend to reject the incompatible evidence

-

-

www.ncbi.nlm.nih.gov www.ncbi.nlm.nih.gov

-

we describe a conceptual framework for understanding adaptive sources of dysfunction – for identifying and combating “adaptations gone awry.”

- we describe a conceptual framework

- for understanding adaptive sources of dysfunction

- for identifying and combating “adaptations gone awry.”

-

Each reflects the operation of psychological mechanisms that were designed through evolution to serve important adaptive functions, but that nevertheless can produce harmful consequences.

- Each of these 4 problems

- anxiety disorder

- domestic violence

- racial prejudice

- obesity

- reflects the operation of psychological mechanisms

- that were designed through evolution

- to serve important adaptive functions,

- but that nevertheless can produce harmful consequences.

-

What do anxiety disorders, domestic violence, racial prejudice, and obesity all have in common?

- question

- What do

- anxiety disorders,

- domestic violence,

- racial prejudice, and

- obesity

- all have in common?

- answer

- maladaptive cognitive biases!

-

mismatches between current environments and ancestral environments

- cognitive biases may cause dysfunction due to mismatches between:

- current environments and

- ancestral environments

-

from aggression and international conflict to overpopulation and the destruction of the environment, people display a capacity for great selfishness and antisocial behavior. Can an evolutionary perspective – with its inherent focus on the functionality of human behavior – help explain the occasionally self-destructive and maladaptive side of human nature?

- from aggression and international conflict to overpopulation and the destruction of the environment,

- people display a capacity for great selfishness and antisocial behavior.

- Can an evolutionary perspective

- with its inherent focus on the functionality of human behavior

- help explain the occasionally self-destructive and maladaptive side of human nature?

-

Relative to the evolutionary past, social relationships in modernized western societies tend to involve a much wider variety of relationships, along with relatively less immediate connection with close, kin-based support networks

- Relative to the evolutionary past,

- social relationships

- in modernized western societies

- tend to involve

- a much wider variety of relationships,

- along with relatively less immediate connection

- with close, kin-based support networks

-

From an evolutionary perspective, social anxiety is designed primarily to help people ensure an adequate level of social acceptance and, throughout most of human history, this meant acceptance in a tightly-knit group based primarily of biological kin

- From an evolutionary perspective,

- social anxiety is designed primarily

- to help people ensure

- an adequate level of social acceptance and,

- throughout most of human history,

- this meant acceptance

- in a tightly-knit group

- based primarily of biological kin

-

Although social anxiety can serve useful functions, it can also involve excessive worry, negative affect, and exaggerated avoidance of social situations. Understanding the root causes of anxiety-related problems is an essential step in the development of interventions and policies to reduce dysfunction.

- Although social anxiety can serve useful functions,

- it can also involve excessive worry, negative affect, and exaggerated avoidance of social situations.

- Understanding the root causes of anxiety-related problems